Academia

“They should never have given us uniforms if they didn’t want us to be an army”: Media in a Time of Crisis


This keynote was delivered at the 2019 Literature/Film Association Conference, held in the beautiful city of Portland, Oregon. I am grateful to the Board of the Literature/Film Association and its membership for the opportunity to deliver these remarks.

As I sat down to write this keynote over the summer, I found it difficult to concentrate. When I wanted to immerse myself into the work of researching the state of the field or drafting some ideas, I instead found myself scrolling through Twitter, looking to see what new horrors were unleashing themselves around the world. Is it extreme weather destroying a small coastal village in Alaska, another mass shooting in someone’s hometown, the immolation of the Amazon forests, another hurricane, or perhaps America’s ongoing human rights violations at the Southern border? It’s all of those things, and much, much more, all the time, every day. There is no day when things seem better, or when there doesn’t seem to be a crisis. Of course, the world has always been this bad, it’s just that more of us—people like me, who haven’t ever really found ourselves at the mercy of bad actors—are finally starting to take notice. To notice is to be distracted. To notice is to feel angry. I feel angry every day, all day long. And I know I’m not alone. These are angry times.

So what do we do with our anger in 2019? When is it productive to allow yourself to be distracted by the world, that is, to be fully and completely angry, and when is that anger just so much screaming into the void? More important to the goals and purposes of the people sitting in this room right now: how might the very field in which we all work provide a pragmatic conduit for our righteous anger? Is it possible that the texts which are so often reviled for their seeming lack of creativity—texts that are reused, recycled, rebooted, and adapted across platforms—could it be that these are texts most suited to times of crisis? In other words, at what point does our anger with the world, our expertise in literary and media studies, and our desire to do something, anything, converge?

Today I want to offer one possible point of convergence: the spring 2019 issue of Feminist Media Studies. This issue builds on the work of contemporary feminist scholars and writers like Sara Ahmed, Rebecca Traister, and Brittney Cooper and their analysis of the revolutionary power of anger. Issue editor Jilly Boyce Kay’s introduction opens by recognizing that “Women’s anger has for so long been cast as unreasonable, hysterical, as the opposite of reason” and that this anger is inextricable from feminism. This is why the anger of women has, for so long, made so many people uncomfortable. Today, Rosalind Gill argues, later in the same issue, feminism has “a new luminosity in popular culture.” But, she cautions, the visibilities of these feminisms remain uneven. For example, as Brittney Cooper explains, black and brown women have historically been policed by respectability politics and culturally determined norms of propriety, as well as the need to manage the self according to the limited standards ascribed to their bodies. “Rage and respectability,” Cooper notes, “cannot coexist.” Black feminists are asked to deny their own rage if they wish to be taken seriously; nevertheless, Cooper argues in favor of the “eloquence” of rage.

Jilly Boyce Kay and Sarah Banet-Weiser further build on these ideas in their essay, “Feminist anger and feminist respair.” “Respair” is a 15th-century word meaning “a recovery from despair.” Kay and Banet-Weiser advocate for the value of respair because it acknowledges the tangled relationship between hope and despair. It is “a hope that comes out of brokenness,” an optimism necessary to channel our outrage. We’re angry because we expect more from the world, and we expect more from the world because we still retain the hope that it can be made better. Another way to think of respair is what science and technology scholar Donna Haraway refers to as “staying with the trouble.” She is worth quoting at length:

Alone, in our separate kinds of expertise and experience, we know both too much and too little, and so we succumb to despair or to hope, and neither is a sensible attitude. Neither despair nor hope is tuned to the senses, to mindful matter, to material semiotics, to mortal earthlings in thick copresence.

In my remarks today I want to further explore these ideas of anger, respair, and “staying with the trouble” as they relate to the central theme of this conference: Reboot, Repurpose, Recycle. I believe the act of repetition and repurposing in the media, in the form of sequels, remakes, and cycles, is one way that we, as consumers and scholars, can stay with the trouble. When we return to the same story, told over and over and over, across time and across media platforms, and when that story feels timely (and it often does), we are choosing to stay with the trouble articulated in this repeated story, and the anger it generates. I think the anger we’re all feeling right now in this moment is, indeed, a productive affect: it just needs to take a form people can recognize.

The Handmaid and Feminist Respair

To that end, I want to explore how this moment of shared anger can be usefully embodied, not by new stories and new ideas, but by texts that already exist in the cultural imagination, texts which are instantly recognizable in their semantics, but hauntingly resonant in their syntax, and ultimately, I believe, politically productive in their pragmatics. Multiplicities can supply the form in which our collective anger can take root and be shared and collectively understood. My case study for this particular exploration is a television series, itself an adaptation of a 1985 novel, which was also made into a 1990 movie, that has become a locus of respair since it premiered in 2017: The Handmaid’s Tale. The Handmaid’s Tale describes a dystopian future in which birth rates have plummeted and the land, air, and water are literally toxic. In response to these apocalyptic developments, a totalitarian, Christian theocracy seizes control of the United States, renaming it “Gilead,” after the holy city. Among the many heinous policies instituted by the government of Gilead, the worst is its enforced classification of women into categories: as wives (who are docile and obedient), as Marthas (who do all the cooking and housework in homes), as Aunts (asexual school marms, only with rape) and as Handmaids (fertile women who are raped under the auspices of God’s will and the greater good). Handmaids are forcibly removed from their homes and families, are stripped of their children, and are expected to be obedient receptacles of the seed of the wealthy men who run the government.

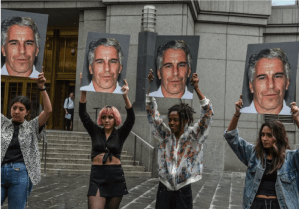

Although America in 2019 is no Gilead (yet), the image of the handmaid has, over the last few years, become a symbol of protest, of women being visibly angry in public. As Shani Orgad and Rosalind Gill argue in the aforementioned issue of Feminist Media Studies, “Against the consistent containment, policing, muting and outlawing of the expression of women’s anger in media and culture, the current moment, specifically in the wake of the #MeToo movement, seems to represent a radical break.” Thus, the handmaid costume is most frequently used to call attention to reproductive justice and attempts to limit it, but it has also been deployed to protest sexual assault, or really any moment when a woman (however she may define herself) feels like she is not sovereign over her own body. In recent years, protestors have donned the iconic handmaid’s costume to protest gender-based inequality in the United States, Argentina, the United Kingdom, and Ireland. The Mary Sue called the costume “a symbol of protest” while The Guardian described it as a “potent medium for dissent.”

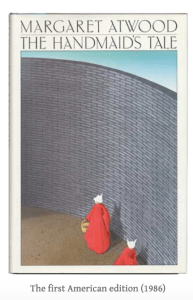

But before red robes marched into DC to denounce Brett Kavanaugh’s nomination to the Supreme Court, or to protest the restrictive “Fetal Heartbeat Bills” in Georgia, Ohio, and Missouri, they were words on a page in Margaret Atwood’s 1985 novel. Here is the first time Atwood mentions the Handmaid’s uniform, just a few pages into The Handmaid’s Tale:

Everything except the wings around my face is red: the color of blood, which defines us. The skirt is ankle-length, full, gathered to a flat yoke that extends over the breasts, the sleeves are full. The white wings too are prescribed issue; they are to keep us from seeing, but also from being seen. (Atwood 8)

This initial description is short but vivid: the red and white cloth, the concealment of the body, the way the wings of the headpiece obscure the handmaid’s vision so that she can only look at what’s right in front of her. The costume is a literalization of the handmaid’s only value—her fertility, the red blood of her womb.

When Atwood’s book was first published, this now-iconic handmaid costume was often the cover illustration, its simplicity lending itself to easy recognizability. However, there were no protests featuring the costume in 1985. In 1990, when a film adaptation of the book starring Natasha Richardson was released, the costume didn’t show up in protests, either. It stayed firmly within the confines of Atwood’s fictional world. So why now? More specifically, how has the rebooted, repurposed and recycled image of the handmaid in contemporary popular culture become a vehicle for feminist respair? A major reason, of course, is the current state of American politics. As media historian Heather Hendershot writes in her analysis of the Hulu series, “If [Atwood’s] original novel was the perfect allegorical response to the Reagan years, and continues to resonate today, the online series speaks quite precisely to the Trump moment.” The Handmaid’s Tale premiered just after Donald Trump was sworn in as the 45th president of the United States, and four months after a recording of Trump bragging about sexually assaulting women was leaked to the public. But Trump isn’t the only reason why the handmaid reverberates so strongly. I believe the resonance of the handmaid costume right here, right now, is only possible because The Handmaid’s Tale is also an example of a contemporary media multiplicity, a text that has been adapted and remade and shared and remixed. The text’s proliferation is what supplies it with power.

What are Multiplicities?

Before we continue down this path, I want to take some time to define a term I’ll use throughout this talk: “multiplicities.” Multiplicities are any pop culture texts that appear in multiples, including adaptations, sequels, remakes, trilogies, reboots, preboots, series, spin-offs, and cycles. As media industries scholar Jennifer Holt explains, the consolidation of TV networks, film studios, music studios, and print media into just a handful of conglomerates means it is increasingly difficult to discuss media platforms as discrete industries: “we must view film, cable and broadcast history as integral pieces of the same puzzle, and parts of the same whole.” Or, as Nicholas Carr, author of The Shallows, writes, “Once information is digitized, the boundaries between media dissolve.” Indeed, as it becomes more and more difficult to discuss media like film, television, and streaming content as separate entities, we need a critical term that allows us to discuss these texts all together. In light of the ever-increasingly transmedial nature of contemporary screen cultures, and the fluid way in which texts move from page to screen to tablet to video game and back again, the term “multiplicities” offers cohesive ways for discussing transgeneric groupings, and theorizing the complicated ties between text, audience, industry, and culture. Read all about it in the anthology I co-edited with R. Barton Palmer, Multiplicities: Cycles, Sequels, Remakes and Reboots in Film & Television. The most central trait of multiplicities is that they refuse to end, insisting that no texts have firm limits; stories can constantly be told, retold, and spread. The study of multiplicities is the study of audiences, of ourselves, of why we (collectively) seek out a version of the same story (or character or subject) over and over again.

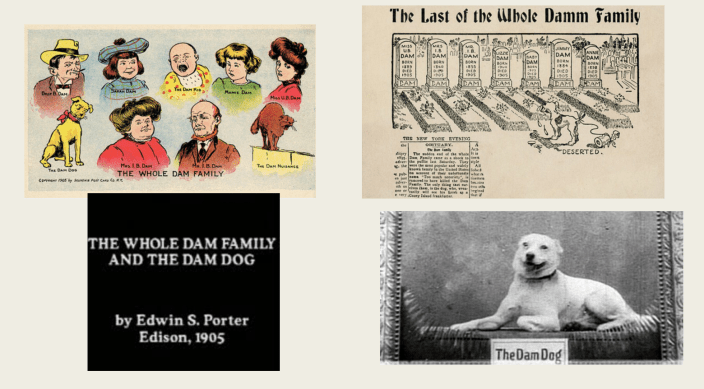

Although film and television seem to be dominated by multiplicities at the moment, it’s a production strategy as old as the cinema itself. In his study of films produced in the years 1902-1903, film historian Tom Gunning found that a large percentage of films were based on plots and characters familiar from other forms of popular entertainment. Multiplicities have always existed, but we haven’t always known it, particularly since we are not always primed to spot the source text when it was intended to be understood by a very different set of viewers (see, for example, The Whole Dam Family and the Dam Dog, a novelty postcard that inspired several short films). The practice of basing films on comic strips, magic lantern shows, popular songs, postcards, as well as other films, and then replicating those successful formulas over and over until they cease to make money has been foundational to the origins and success of filmmaking worldwide.

Still, another reason why it might feel like we have more multiplicities today than ever before is that the terms we use to discuss multiplicities, like remake and reboot, emerged at different moments in media history. For example, historian Jennifer Forrest’s research into early cinematic remakes found that before the Copyright Statute of 1906, individual films were seen as commodities, not as “works of art.” Unauthorized imitations, such as Edison’s remake of the Lumière Brothers’ famous film, Arrival of the Train, titled The Black Diamond Express, were common practice before 1906 and were not, legally at least, labeled as “remakes.” In other words, early cinema was filled with remakes, reboots, sequels, and adaptations; we just didn’t name them as such.

Yet another reason why it feels like we have more multiplicities now than we did in the past is the short shelf life of Classical Hollywood films prior to the popularity of TV in the 1950s. Because films were generally screened once and then never seen again, it was difficult to recognize a remake when it appeared. For example, Thomas Leitch discusses how Warner Bros released three films based on the same intellectual property: Dashiell Hammett’s hard-boiled novel, The Maltese Falcon. In a 10-year period, Warner Bros released 1931’s The Maltese Falcon, 1936’s Satan Met a Lady, and 1941’s The Maltese Falcon. They were not described as remakes at the time of their release, and were not recognized as such. In the past the links between texts were either ignored or actively hidden. But by 1960, 90% of American homes had at least one television, and this sharp increase in TV ownership generated a need for more TV content. One solution was to purchase film studios’ back catalogs of films, and soon TV stations were flooded with thousands of previously shelved titles. This shift is important to the history of multiplicities because, as Constantine Verevis argues, American viewers could now see films that had been out of circulation for decades, which gave them the opportunity to more directly compare different iterations of the same intellectual property. Consequently, audiences became more aware when different forms of entertainment were adaptations or remakes or reboots or sequels or prequels of something else they had read or watched or heard before.

Verevis further argues that we are now in an era of postproduction, a transformed media culture that arose in response to a combination of forces—conglomeration, globalization, and digitization. The market is flooded with content that can be consumed on a multitude of platforms, often with a single click. Whereas one might have needed to screen dozens of movies, from different decades and cultures, to catch all of the movie references in a Quentin Tarantino film, today it is possible to Google them in the span of a few minutes. We are all more media literate than ever before because it is easier to be media literate.

Repetition and Bad Texts

Postproduction has incentivized media conglomerates to increasingly rely on a culture of repetition, replication, sequelization and rebooting. Nevertheless, this production model is often equated with the dwindling of creativity and the bastardization of the ancient art of filmmaking. In just the last month, the DC/Marvel multiverses, The Lion King “live action” remake, and the recently announced plans to reboot the 1990 hit, Home Alone, have all been the subject of hyperbolic criticism from audiences and critics. When discussing multiplicities, critics use terms like “plague” and “bombardment,” as if these texts, which we are all free to consume or ignore, or consume and ignore, are coming for us whether we like it or not. A recent article in The Guardian is representative of this discourse: “Hollywood…seems determined to serve up a relentless platter of regurgitated and recycled fare. And it’s slowly making large portions of its audience sick.”

It’s not just contemporary critics sounding the alarms about multiplicities; the decades-old writings of post-modern thinkers like Jean Baudrillard, Guy Debord and Fredric Jameson seem to have predicted our current media landscape. For example, Jameson places the remake in the larger category of pastiche, an affectless or neutral imitation of another text, arguing that cultural production “can no longer look directly out of its eyes at the real world for the referent but must, as in Plato’s cave, trace its mental image of the world on its confining walls.” Multiplicities are still described in these terms: reductive, confining, even dangerous. It’s hard not to look at the state of contemporary media multiplicities and fear the worst.

Many of the complaints about multiplicities are rooted in the assumption that this frequent recycling of familiar plots and characters must mean that media producers are lazy, using the easiest route to achieve financial success. Critiques of multiplicities argue that a movie or television series that unabashedly courts the audience’s desires is somehow less artful, less complex, or less worthwhile than one that exists to thwart, complicate, or comment on those audience desires, or that the audience is somehow being exploited, or manipulated into spending money on an undeserving piece of art. As Pierre Bourdieu argued in Distinction, a study of taste in 1960s France, bad or maligned art objects are most frequently those texts whose pleasures are easily accessed and immediately apparent, thus confirming the superiority of those who appreciate only the “sublimated, refined, disinterested, gratuitous, distinguished pleasures forever closed to the profane.” Bourdieu argues that the lower and working classes are not predisposed to view art objects with detachment since their livelihoods depend on a constant, active engagement with the material world. Scholars of the melodrama and soap opera also note that this ranking of what is a guilty pleasure and what is not has its roots in classed and gendered prejudice; designations of taste work to keep certain ideas, images, texts, and audiences in “their place.” As Michael Newman and Elana Levine argue in their study of the legitimation of television, “There is nothing intrinsically unimaginative about continuing a story from one text to another. Because narratives draw their basic materials from life, they can always go on, just as the world goes on. Endings are always, to an extent, arbitrary. Sequels exploit the affordance of narrative to continue.” When critics complain about Hollywood’s lack of creativity, these complaints are rooted in a distaste for those who seek out these texts, and gain pleasure from them.

Multiplicities are also viewed with suspicion because they refuse to end, denying the kind of closure necessary in or at least desirable for literary forms in which the material object of the book determines forms of storytelling. Multiplicities insist that no texts have firm limits — that any story can be retold, reconfigured, and spread around. Why is this troublesome? Because a text without end is a text that never relinquishes its hold on our time. If the text never ends, we could, hypothetically, watch the text forever, and then, how would we ever get anything else done?

This fear was acknowledged as early as 2011, when the sketch comedy series Portlandia depicted a couple getting sucked into the DVD box set of Battlestar Galactica, foregoing all other obligations, including going to work and using the toilet. The joke here is that it’s absurd to get so entranced in a television series, but also, we may not be able to control ourselves. In a 2013 New Yorker essay, Ian Crouch attributed the shame associated with binge-watching to “the discomfiting feeling of being slightly out of control—compelled to continue not necessarily by our own desire or best interests but by the propulsive nature of the shows themselves.” An unspoken emotion in these thinkpieces is shame, the shame of being out of control, the shame of indulging in repetition.

Repetition is associated with a dulling of the senses, with something masturbatory and excessive, or something simplistic, like a child who always asks to read the same book at bedtime. And sometimes multiplicities feel that way, reflecting the way we feel about ourselves in a world that our brain can’t comprehend, despite or perhaps because of the sheer amount of data we have streaming into our phones at all hours of the day. We might feel lazy and ineffectual and indulgent when we sit around binge-ing a TV series. But the effect of binge watching The Handmaid’s Tale, a TV series that forces the viewer to be immersed in its terrible world of rape and silencing and violence, is to produce a viewer who is afterwards exhausted, sad, and possibly fuming. I think that’s a good place to be right now. Bear with me here: what if we viewed retreating to our screens as staying with the trouble, instead of running from it? Which brings me back to The Handmaid’s Tale, as I promised earlier.

When some people binge watch The Handmaid’s Tale, while others read the book, and still others just see the memes online, they may be consuming different media, but they are experiencing the same story. The red robes and white caps serve as a shared point of contact, a unit of cultural transmission, and a link between disparate groups of people. Multiplicities offer the possibility of this shared culture, of connecting with someone we’ve never met. When we see handmaids lounging near the Lincoln Memorial or standing solemnly outside the Alabama courthouse, we are being asked to stay with the trouble, to see the allegoric repression of Gilead overlaid on our real, living, breathing world. This connection is not just made by me, but by anyone who has seen the series, or heard of it, or read the newspaper or just scrolled through social media. Though repetition has negative connotations, it is precisely the repetition of images that supplies their power; their proliferation ensures that they cannot be ignored or dismissed. As new media scholar Jean Burgess argues, memes are a powerful medium of social connection due to their spreadability. Memes are propagated by being taken up and used in new works, in new ways, and therefore are transformed on each iteration, what Burgess terms a “copy the instructions,” rather than “copy the product” model of replication and variation. The subject of many memes, the image of the handmaid is also spreadable, and its spreadability forms the crux of its power.

For example, two years ago I decided to dress up as a Handmaid for Halloween, complete with a red dress and cloak, and a large, vision-obstructing white bonnet. I wore the costume to work, because I taught on Halloween, and also out to some Halloween parties that weekend. What struck me, both on my college campus and out in my community, were the emotional reactions my costume evoked. When people recognized who I was (and that moment happened almost instantly) there was amusement, followed by a kind of fury. The joy and the anger rested side by side. And this affect was only possible because the image is so widely known, because it is a multiplicity.

Far from robbing fans of access to novelty and personal connection, contemporary media multiplicities can generate strong emotional resonance and a creative flourishing both within and among fans. Every new Thor and Captain America is a new visit with the same character, which has in turn been molded to resonate with the world as it is happening. The aura, seemingly absent from texts that repeat the form and content of previous texts, is invented anew in every retelling, tweet, meme, and cosplay. I would go so far as to say that these conglomerate, multiplatform, transmedia stories—the Boy who lived, the fight against the Dark Side, the battle over who shall rule Wakanda—may be the only way for our culture to have a shared cultural touchstone. At a time when we’re no longer reading the same newspapers (and most newspapers are gone anyway), or even believing in the same set of facts (like whether or not a hurricane will hit Alabama) all at the same time, media multiplicities may be our only remaining watercooler moments. Our ability to take note of the multiplicities around us—that so many stories are simply retellings of stories we already know—provides us with a shared narrative, a collective symbol for articulating what is happening around us.

Conclusions

In the season 1 finale of The Handmaid’s Tale, the protagonist, Offred, describes a similar moment of wordless connection that occurs among the handmaids. She explains:

There was a way we looked at each other at Red Center. For a long time I couldn’t figure out what it was exactly. That expression in their eyes. In my eyes. Because before, in real life, you didn’t ever see it. Not more than a glimpse. It was never something that could last for days. It could never last for years…

This voiceover is the audio counterpoint to scenes from Offred’s life at the Red Center, where she and the other fertile women of Gilead are being trained, often violently, for their future roles as handmaids. The episode then cuts to the present day, to Offred in her handmaid uniform, just after she commits an act of rebellion by smuggling a package of letters written by imprisoned handmaids across Gilead. It’s a small rebellion, the first of many that Offred will attempt during her time in Gilead. “It’s their own fault,” she muses to herself, “They should never have given us uniforms if they didn’t want us to be an army.” The replicated red robes of the handmaids, intended to render them as a mass of faceless babymakers, as opposed to individual women of agency, have had the opposite effect. Or rather, the effect created by the shared uniform opposes the docility that Gilead had hoped it would confer on the handmaids. Here, sameness isn’t a demoralizing force, it’s an organizing force. It doesn’t make them the same, it makes them united. This is a crucial difference.

This keynote is a call for scholars and fans and audiences alike to recognize the role of media multiplicities, those denigrated, unoriginal texts, not just in our field, but in the work of justice and good citizenship. Rather than feel guilt over our desire to experience the same story, over and over, we should watch and feel that moment of connection with all those other people who have watched, will watch, and are watching the same thing. This is not a turning away from society, but a turning towards it, to other people. Shared stories mobilize us, and help us to see what we have in common with those around us. There is power in an image that evokes collective rage over injustice, that gathers mass as it’s shared. Or to quote Offred, “they never should have given us multiplicities if they didn’t want us to be an army.”

Bibliography

Burgess, Jean. “‘ALL YOUR CHOCOLATE RAIN ARE BELONG TO US’?: Viral Video, YouTube and the Dynamics of Participatory Culture.” Video Vortex Reader: Responses to YouTube. Amsterdam: Institute of Network Cultures. 101-109.

Carr, Nicholas. The Shallows: What the Internet is Doing to Our Brains. WW Norton, 2011.

Child, Ben. “Don’t Call it a Reboot: How Remake Became a Dirty Word in Hollywood.” The Guardian, 24 Aug, 2016.

Cooke, Carolyn Jess. Film Sequels: Theory and Practice from Hollywood to Bollywood, (Edinburgh: Edinburgh University Press, 2009)

Cooper, Brittney. Eloquent Rage: A Black Feminist Discovers Her Superpower. New York: St. Martin’s Press, 2018.

Constandinides, Costas. From Film Adaptation to Post-Celluloid Adaptation: Rethinking the Transition of Popular Narratives and Characters across Old and New Media. New York: Continuum. 2010.

Crouch, Ian. “Come Binge with Me.” The New Yorker, 18 Dec 2013.

Dusi, Nicola. “Remaking as Practice: Some Problems of Transmediality.” Cinéma & Cie 12.18: 115–27. 2012.

Flynn, Daniel J. “Reboots & Remakes Ruin Hollywood.” The American Spectator. 14 Dec, 2018.

Forrest, Jennifer and Leonard R. Koos, eds. Dead Ringers: The Remake in Theory and Practice. Albany: SUNY Press, 2002.

Gill, Rosalind. “Post-postfeminism?: new feminist visibilities in postfeminist times,” Feminist Media Studies, 16:4, 610-630. 2016.

Haraway, Donna. Staying with the Trouble: Making Kin in the Chthulucene. Durham: Duke University Press, 2016.

Hendershot, Heather. “The Handmaid’s Tale as Ustopian Allegory: ’Stars and Stripes Forever, Baby.” Film Quarterly. 72.1 (2018): 13-25.

Hills, Matt. Fan Cultures. London: Routledge. 2002.

Horton, Andrew and Stuart McDougal, eds. Play it Again Sam: Retakes on Remakes. London: University of California Press, 1998.

Jenkins, Henry. Convergence Culture: Where Old and New Media Collide. New York: NYU Press, 2008.

Jenkins, Henry. “If It Doesn’t Spread, It’s Dead (Part One): Media Viruses and Memes.” Confessions of an Aca/Fan. 11 Feb 2009.

Kane, Vivian. “The Team Behind Hulu’s The Handmaid’s Tale Are Proud of the Costume’s Place In Protest Culture.” The Mary Sue. https://www.themarysue.com/handmaids-tale-protest-uniform/

Kay, Jilly Boyce. “Introduction: anger, media, and feminism: the gender politics of mediated rage,” Feminist Media Studies, 19:4, 591-615. 2019.

Kay, Jilly Boyce & Sarah Banet-Weiser. “Feminist anger and feminist respair,” Feminist Media Studies, 19:4, 603-609. 2019.

Klein, Amanda and R. Barton Palmer, Eds. Multiplicities: Cycles, Sequels, Remakes and Reboots in Film & Television. Austin: University of Texas Press. 2016.

Lavigne, Carlen and William Proctor, Eds. Remake Television: Reboot, Re-use, Recycle. Plymouth: Lexington Books, 2014.

Leitch, Thomas. “Twice-Told Tales: Disavowal and the Rhetoric of the Remake,” in Jennifer Forrest and Leonard R. Koos (eds.), Dead Ringers: The Remake in Theory and Practice. Albany: State University of New York Press. 2002.

Loock, Kathleen and Constantine Verevis. Film Remakes, Adaptations, and Fan Productions: Remake/Remodel. Basingstoke: Palgrave Macmillan, 2012.

MacCabe, Colin, Kathleen Murray, and Rick Warner, eds. True to the Spirit: Film Adaptation and the Question of Fidelity.

Orgad, Shani & Rosalind Gill. “Safety valves for mediated female rage in the #MeToo era,” Feminist Media Studies, 19:4, 596-603. 2019.

Perkins, Claire and Constantine Verevis. “Introduction: Three Times,” in Film Trilogies: New Critical Approaches, eds. Claire Perkins and Constantine Verevis. Houndmills: Palgrave Macmillan, 2011.

Proctor, William. 2012, February ‘Regeneration and Rebirth: Anatomy of the Franchise Reboot.’ Scope: An Online Journal of Film and Television Studies 22: 1–19.

Rutledge, Pamela B. “Binge-Watching: If You’re Feeling Ashamed, Get Over it.” Psychology Today. 28 Jan 2016

Verevis, Constantine. Film Remakes. Edinburgh: Edinburgh University Press. 2006.

Wood, Helen. (2019) “Fuck the patriarchy: towards an intersectional politics of irreverent rage,” Feminist Media Studies, 19:4, 609-615

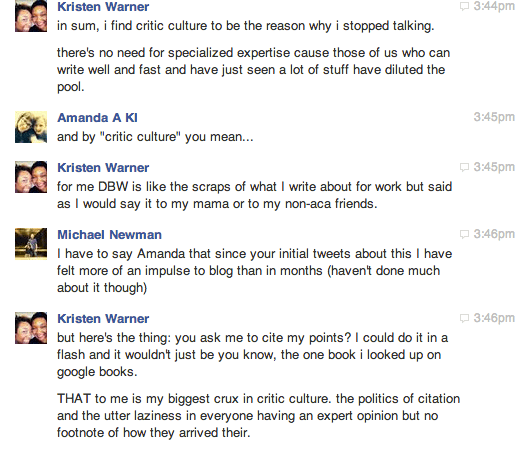

Erasing the Pop Culture Scholar, One Click at a Time

Hello darlings.

I am super pleased to have this piece published over at The Chronicle of Higher Education on the need for well-researched pop culture writing. Yes, even in thinkpieces. Also pleased to have co-authored this with my dear pal, Kristen Warner, who is a big smartypants.

Read, enjoy, make snarky comments about how this article means we are elitists [shrugs]:

Click here to read.

Starving the Beast: The UNC System in 2015

I’m writing this post from inside a bunker. Outside the storm is raging but I’m hunkered down, amongst my canned goods and bottled water, waiting for the end of the UNC system to arrive. Many of my fellow professors may be looking out their windows right now, and the sun is shining and the birds are singing. But I assure you, in North Carolina, the sky is falling. Fast.

I have been a professor at a state university in North Carolina for the last 8 years. This is the longest I’ve ever held the same job—or any job for that matter. This was my first job out of graduate school, the golden ticket. I was finally on the tenure track!

At the time of my job offer, I was especially excited to join the UNC system, widely considered the “jewel” of North Carolina:

“The [UNC] university system has not only educated thousands, it has been an economic engine, helping spawn entrepreneurs such as Jim Goodnight and Dennis Gillings, attracting huge research grants and making the Research Triangle Park possible.

It was not a given that a largely poor, rural state such as North Carolina would create a great university system. It took a sustained effort by generations of business and civic leaders to make it so.”

There are 17 universities that exist under the UNC umbrella, a move deemed, in 1971, to be a “political miracle” and which ultimately “turned the state of North Carolina into a national leader in higher education, and in the process, transformed the state into one of the most prosperous in the South.” And yes, I felt a true sense of pride when I accepted a job here in 2007. In fact, I had two job offers in 2007 (oh 2007, you were great!): one from Greenville, North Carolina and one from outside Detroit, Michigan. In 2007, North Carolina seemed like an infinitely more attractive choice based solely on the local economy, the amount of higher education funding it appeared to be receiving (at least at my hiring institution), and the overall prestige of the UNC system.

In 2007, pre-Recession academia was failing, but no faster than many other long-standing institutions. And even during the 2008 Recession, when all North Carolina state employees took a pay cut and travel money was slashed and low-enrollment classes were cut, the waist cinching felt reasonable and necessary and collegial, like we were all doing our part to muddle through the Recession together. Truly, this was the attitude in 2008. The budget cuts were temporary, we were told. Things will get better, we were told. We just needed to sit tight and be patient and wait for the economy to improve, we were told. Then? Everything would go back to normal.

I’ll admit that I watched these heavy cuts tear through my university from the frazzled standpoint of a pre-tenure mother of two young children. I knew that things were bad, but my biggest concern wasn’t salary compression or gendered wage inequality or the exploitation of fixed term faculty (all major problems at my university). No, to be honest, my biggest concerns during those years were purely selfish: finishing my book and getting more than 3 consecutive hours of sleep at a time. I was exhausted and detached, like so many of my colleagues. We all knew that the cuts seemed unfair but we also knew that things would likely get better soon.

Well, they didn’t. In fact, things got much worse. In 2011, former UNC System President Erskine Bowles warned of the dangers inherent in the radical cuts that were being made to the state’s university system:

“We not only have to provide an education that is as free from expense as practicable, but we’ve also got to provide a high-quality education…I’ve always believed that low tuition without high quality is no bargain for anyone. It is not a bargain for the student, and it is not a bargain for the taxpayer”

Bowles’ words have turned prophetic. The North Carolina legislature’s short term money-saving cuts have had longterm negative impacts on public education in this state. Here are just a few (and trust me, there are far too many to list here):

- In 2013 the NCGA voted to stop offering pay raises to K-12 public teachers who earn a Masters degree, a move which discourages professional development. In an ironic twist, enrollments in my department’s Masters program, a program which catered to many local teachers looking to improve their pedagogy and their salary, have dropped significantly. A true lose/lose scenario for NC’s teachers and students.

- North Carolina has more Historically Black Colleges and Universities (HBCUs) than any other state in the country (11) but cuts to higher ed funding in the state are preventing many students from being able to attend these schools. As a result, HBCUs like North Carolina Central University and Elizabeth City College are seeing significant drops in enrollments. Johnny Taylor, president of the Thurgood Marshall College Fund, argues that enrollment declines are particularly devastating for HBCUs. He explains: “If you take a school like N.C. A&T, where 50 percent of the country’s undergraduate black engineers come to one school, it would seriously impact our workforce diversity initiative if that school didn’t exist.” Indeed, Elizabeth City College, which lost 10% of its funding in 2013-2014, has been teetering on the edge of being shut down altogether.

- Faculty who can leave, do leave. For example, one of my colleagues, a dear friend and a brilliant scholar, left our department in 2011 in order to chair another English department. He simply could not support his family on the salaries paid to English faculty. Shortly after leaving our department this colleague made an historic discovery, was profiled in The New York Times, and was awarded a research fellowship at Harvard. When you don’t compensate your best faculty, they leave. End of story.

- Faculty, like me, who have thus far been unable to secure employment outside of North Carolina, have only had a 1.2% pay increase since 2008. That means that tenured faculty like me, who have 8 years of experience teaching the university’s student body and who have won teaching and research awards, are actually earning less today than we did when we were first hired 8 years ago. We are being penalized for our experience and our dedication to the university. I have been explicitly told that the only way I will see a raise is if I snag a competing job offer. Of course, a female colleague of mine did get a competing job offer, in an effort to raise her salary, and my university basically told her to have a nice life. So she’s still in NC, making what I make.

- Funding for travel has been scaled back or eliminated altogether. For example, I have been planning to attend a prestigious academic conference in Ireland this summer, during which I will present research from my next book project. Attending conferences like these is essential for scholars to gain feedback and share their research with others. But, there are no more international travel grants, as I just learned recently when I tried to apply for one:

- Fixed term instructors are losing contracts at an alarming rate.

- All faculty are being asked to teach more classes, filled with more students, for no additional compensation.

The list goes on and on.

But, here are some things the North Carolina General Assembly did vote for recently:

- allowing guns on school grounds

- tax breaks on yacht sales

- $41,000 tax breaks for those making $1 million or more per year

- restrictive voter registration rules

- requiring middle school teachers to discuss “sensitive and scientifically discredited abortion issues with students.”

But perhaps what is most offensive in this climate of austerity and sacrifice is when the UNC Board of Governors recently recommended salary increases for the UNC system’s highest paid employees, including presidents and chancellors:

“Under the new parameters, the salary for a UNC president would match that for chancellors at the two flagship research campuses – UNC-Chapel Hill and N.C. State University. The levels could range from a minimum of $435,000 to a maximum of $1 million, with the more likely market range of $647,000 to $876,000”

Maybe I’m biased, but I was always under the impression that the most important commodity at a university is the quality of its teaching staff, not the quality of its administrators. When I graduated from college in 1999 I didn’t think back fondly on all the administrators I had encountered along the way. So what explanation could possibly be given for proposing that university administrators deserve “competitive salaries” but the university’s teachers don’t? Why is the Board of Governors only concerned with attracting and retaining top administrative talent, not top teaching or research talent?

What, exactly, is going on? My university, you see, is very slowly being converted from an institution of education into a business. “Can’t it be both?” some of you might be thinking. “Wouldn’t academia, that dying giant, benefit from trimming the fat and keeping an eye towards pleasing the customer?” The answer, as someone who has slowly watched her university transition from a university into a Wal Mart over the last 8 years, is a resounding no.

Now, I can’t speak for every professor in the UNC system but I can tell you that I’ve witnessed these practices firsthand and they are destroying this university by slowly sucking the lifeblood out of its faculty. That metaphor may feel hyperbolic but trust me, it’s accurate. Indeed, it is the very metaphor employed by North Carolina’s own policy makers.

Back in February 2011, Jay Schalin of the Art Pope Center (which is essentially North Carolina’s own personal Koch Brothers), wrote an article entitled “Starving the Academic Beast,” which details the ways NC can “restore the 17 campus University of North Carolina system to its proper size and role.”

So the stated goal of the Art Pope Center is, indeed, to “starve the beast.” But one has to wonder: since the North Carolina State constitution states that “The General Assembly shall provide that the benefits of The University of North Carolina and other public institutions of higher education, as far as practicable, be extended to the people of the State free of expense” AND since public education has proven to be the one enterprise that consistently provides a significant return on investment, then what THE HELL are these people doing and WHY are they doing it? Do they simply wish to starve the “beast” down into a nice, lean fighting weight? No, my friends, they want us to starve to death. And they are succeeding.

It’s also worth pointing out that 3 of the 10 most gerrymandered districts in the country are right here in North Carolina:

That means we will likely have to accept the current policies (which only seem to be getting worse and worse) until at least 2016. This sad state of affairs has been incredibly demoralizing for me and for my colleagues. Many folks are resigned to the way things are, shrugging their shoulders and saying, “Why fight? There’s nothing to be done.” Fixed term faculty, whose precarity renders them most vulnerable to the whims of our legislature and, ironically, makes them least able to speak up about it, are worried about paying their bills. As my former colleague, John Steen, a visiting professor in my department who, despite being a brilliant scholar and talented teacher, must now pursue a different line of work, writes:

“Currently, over 52 percent of ECU faculty are fixed-term, and that number’s rising. This means that over half of the ECU faculty can leave the classroom after final exams uncertain of whether they’ll return for the next semester. Nationwide, 31 percent of part-time faculty earn near or below the federal poverty level. For raising a family, paying rent, and for supporting students throughout their ECU careers, that’s not nearly enough.”

Tenure track faculty, who have yet to submit their tenure materials, are worried about jeopardizing their one chance at academic job security (most of us on the tenure track are aware that we are lucky to have any job at all). We’re too scared to speak and too scared to be silent.

Well, that’s not exactly true.

As a result of these draconian policies, which both implicitly and explicitly seek to “starve” the great beast that is public education in North Carolina, some of us have started to get mad. And we’re trying to make some noise. And a lot of us have tenure, the one thing, so far, that the General Assembly hasn’t taken away.

So this post is for you, my tenured friends. We are small in number—indeed, we are an endangered species. But I am calling on you now to speak up and get involved. Talk to your colleagues, your department chairs and your deans. Write letters to the editor of your local paper and to your student paper. Write to your legislators. Talk to your students about this–if anyone should be outraged about having to pay more tuition for less and less instruction it should be them.

But really, shouldn’t this outrage all of us? An affordable public education is the promise we made, collectively, as a state, many decades ago. We pay taxes for this education. This university system belongs to US, the people of North Carolina. So why are we allowing this wonderful, economy-boosting, prestige-raising, research-generating “beast” to starve? Are we really helpless? Have we really been stripped of all options? No. Because as long as we can speak and type and scream, we can fight. Won’t you join us?

Plagiarism, Patchwriting and the Race and Gender Hierarchy of Online Idea Theft

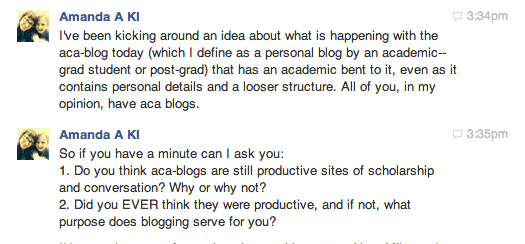

Several months ago I published a 2-part guide to the academic job market right here on my blog (for free!!!!!!!!!!), as a way to help other academics explain this bizarre, yearly ritual to family and friends. Indeed, several readers told me that the posts really *did* help them talk to their loved ones about the academic job market (talking about it is the first step!). Yes, I’m working miracles here, folks. And then, this happened:

“A few months ago, as I was sitting down to my morning coffee, several friends – all from very different circles of my life – sent me a link to an article, accompanied by some variation of the question: “Didn’t you already write this?” The article in question had just been published on a popular online publication, one that I read and link to regularly, and has close to 8 million readers.

Usually, when I read something online that’s similar to something I’ve already published on my tiny WordPress blog, I chalk it up to the great intellectual zeitgeist. Because great minds do, usually, think alike, especially when those minds are reading and writing and posting and sharing and tweeting in the same small, specialized online space. I am certain that most of the time, the author in question is not aware of me or my scholarship. It’s a world wide web out there, after all. Why would someone with a successful, paid writing career need to steal content from me, a rinky-dink blogger who gives her writing away for free?

But in this case, the writer in question was familiar with my work. She travels in the same small, specialized online space that I do. She partakes of the same zeitgeist. In fact, she had started following my blog just a few days after I posted the essay that she would later mimic in conceit, tone and even overall structure.

Ethically speaking, idea theft is just as egregious as plagiarism, especially when those ideas are stolen from free sites and appropriated by those who actually make a profit from their online labor.

When pressed on this point, the writer told me that she does read my blog. She even had it listed on her own blog’s (now-defunct) blogroll. But she denied reading my two most recent posts, the posts I accused her of copying. Therefore she refused to link to or cite my blog in her original piece, a piece that generated millions of page views, social media shares, praise and, of course, money, for both her and the publication for which she is a columnist.

So if a writer publishes a piece (and profits from a piece) that is substantially similar to a previously published piece, one which the writer had most certainly heard of, if not read, is this copyright infringement? Has this writer actually done something wrong?”

Well, Christian Exoo and I decided to try to find out. To read our article “Plagiarism, Patchwriting and the Race and Gender Hierarchy of Online Idea Theft” at TruthOut, click HERE.

Oh Captain, my Therapist!

Note: a big thanks to Vimala Pasupathi for the constructive conversations that culminated in this post.

If you are a college-level educator, you have most likely experienced the following scenario: a once-promising student stops attending class or turning in her assignments. You know this student, her work ethic and temperament, and thus, her uncharacteristic behavior concerns you. You send the student several email inquiries — gentle nudges about upcoming assignments, reminders that her grade is free-falling, offers to chat during your office hours. Finally, the student shows up in your office looking wan and shaken. She tells you she’s been having trouble getting up in the morning. The thought of leaving her bed exhausts her. She has no energy. She can’t concentrate. She is missing all of her classes, not just yours. She is in danger of failing the entire semester and losing her financial aid and if she loses her financial aid, she tells you, she’ll have nowhere to live. She looks at you, with tears in her eyes, grateful to finally have someone to talk to. It’s clear that this is the first time she’s articulated these spiraling fears to anyone out loud. “What should I do?” she asks you, and she means it. She wants you to tell her what she should do.

According to a 2012 survey conducted by the National Alliance on Mental Illness (NAMI), 64% of students polled said they dropped out of college for a mental health-related reason. A 2013 poll conducted by the Association for University and College Counseling Center Directors found that the top mental health concern among college students was anxiety (41.6 percent), followed by depression (36.4 percent) and relationship problems (35.8 percent). These numbers, apparently, have been on the rise since the mid-1990s, and Psychology Today’s Gregg Henriques believes it has become a full-scale crisis: the College Student Mental Health Crisis (CSMHC). These claims are not news to those of us who work with college students every day. Every year more and more students miss classes, entire semesters and even drop out of school due to mental health issues. And those are just the students who openly discuss their mental health struggles. Many more remain silent and thus, undiagnosed and therefore, untreated.

These statistics are certainly troubling for professors who work with these students on a daily basis. But, perhaps, just as troubling are the increased responsibilities piled onto the already overburdened instructor, responsibilities which no one is talking about. At the same time that universities are asking more and more of faculty in terms of assessment, recruitment and program development (on top of teaching, service and gasp! research), professors are now increasingly finding themselves in the position of playing armchair psychologist to their students. For those of us who work at universities catering to low-income, first-generation, or non-white college students, the odds that these students will have undiagnosed mental health struggles are even greater. Yet most faculty working today are not provided with the resources (in terms of training, time or, most importantly, financial compensation) to competently deal with this crisis in student mental health. And make no mistake: this has, for better or worse, become our responsibility. Paul Farmer, chief executive of Mind, believes:

Higher education institutions need to ensure not just that services are in place to support mental wellbeing, but that they proactively create a culture of openness where students feel able to talk about their mental health and are aware of the support that’s available.

Yes, today the college instructor frequently finds herself in the difficult position of having to simultaneously play the role of psychiatrist, family counselor, financial advisor, and life coach, all while having to make very real, very difficult decisions about the student’s academic future. The standard advice from the university is to send the student to their mental health services, but these campus centers often have very long waits and/or find themselves underfunded and understaffed. As Arielle Eiser reports:

College counseling centers are frequently forced to devise creative ways to manage their growing caseloads. For example, 76.6 percent of college counseling directors reported that they had to reduce the number of visits for non-crisis patients to cope with the increasing overall number of clients.

More often than not, recommending that the student head to a campus counseling center means simply passing the buck. In my personal experiences at least, that student will disappear from campus, becoming one of the 64% who leave college due to mental health issues.

As an academic advisor my job is to shepherd a group of students through their English major — they must meet with me each semester to discuss their schedule, their progress towards graduation, and their academic standing. Each semester I get a list of student names, along with their registration code for the next semester (a process which ensures that students must meet with me prior to registering for classes). It always breaks my heart when I look at that list of advisees and see the ones with no registration code next to their names. These are the students who have not re-enrolled for the semester. These are the students I have lost.

If only I had checked in on that student after our last tearful meeting. If only I had taken the time to make sure she was still going to class, turning in her work, registering for her next semester. A single email, hastily written and sent, might have been the difference between staying in or dropping out. These are the kinds of emails my best self sends, the self I wish I were all the time, but which I am only when my deadlines are met, my children are healthy, and I’m caught up on Downton Abbey. These unmade choices torture me because they exist as possibilities, reminding me of everything I might have done and didn’t. My job and salary don’t depend on sending those emails. Therein lies the rub. When students fail and drop out of the system, who is to blame? It’s the student, sure, but it’s also those of us who are tasked with advising them. And it is this unpaid, unmarked labor that becomes “key” to student retention, a job which has, quite suddenly, been shuffled onto my already very full plate.

So much of the labor expected of faculty today, both on and off the tenure track, is unmarked and unpaid. As our salaries stagnate, our job descriptions inflate exponentially. Although the ranks of middle management, the dreaded Associate Deans, have swelled over the last few years, faculty are, ironically, being asked to take on more and more of the management burden. Our department chairs no longer assess our research, service and teaching contributions. Instead, we assess ourselves and turn those documents in to our chair, who then quickly rifles through our summaries, offering us arbitrary numbers meant to represent our achievements. The university no longer assesses the value of our individual programs. Instead, we assess our programs — through Byzantine rubrics and committees and “objectives” — and then turn these documents in to our middle-management overlords for quick perusals. The university is no longer tasked with recruiting new students to our programs. No, that is now my responsibility, despite the fact that I have no training in marketing or recruitment. I am expected to spend my work hours (the hours for which I pay for childcare) pitching English courses to community college students or thinking of sexier ways to describe my courses to undeclared majors. And then, if my classes don’t fill up? Yeah, that’s my fault. And I’m told I have to teach freshman composition.

Almost every week I receive a new email announcing the formation of yet another subcommittee on which I am supposed to volunteer to serve. I should volunteer, you see, because we all need to pitch in together and help! We’re a team! Almost daily I receive an email inviting me to attend another training workshop that will show me how to better assess my program or better manage the time that is increasingly being taken up with deleting emails inviting me to time management seminars. There is simply not enough time.

So how do I help my anxious, depressed, spiraling-out-of-control students when I don’t even know how to help myself with these problems? If I ignore the students’ cries for help, their mental health is compromised. If I help them, mine is compromised. This zero-sum game involves just me and the students. One of us is going to lose and right now, it’s both of us.

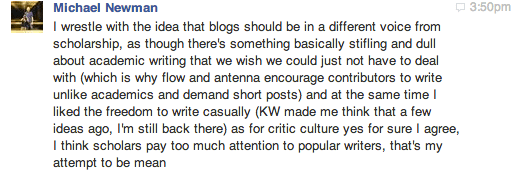

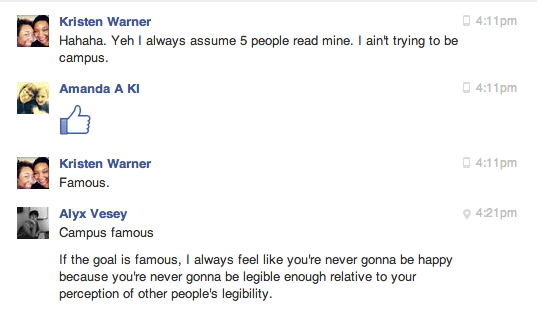

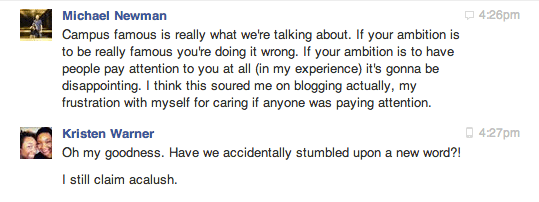

In Defense of Academic Writing

Academic writing has taken quite a bashing since, well, forever, and that’s not entirely undeserved. Academic writing can be pedantic, jargon-y, solipsistic and self-important. There are endless think pieces, editorials and New Yorker cartoons about the impenetrability of academese. In one of those said pieces, “Why Academics Can’t Write,” Michael Billig explains:

Throughout the social sciences, we can find academics parading their big nouns and their noun-stuffed noun-phrases. By giving something an official name, especially a multi-noun name which can be shortened to an acronym, you can present yourself as having discovered something real—something to impress the inspectors from the Research Excellence Framework.

Yes, the implication here is that academics are always trying to make things — a movie, a poem, themselves and their writing — appear more important than they actually are. These pieces also argue that academics dress simple concepts up in big words in order to exclude those who have not had access to the same educational expertise. In “On Writing Well,” Stephen M. Walt argues:

jargon is a way for professional academics to remind ordinary people that they are part of a guild with specialized knowledge that outsiders lack…

This is how we control the perimeters, our critics charge; this is how we guard ourselves from interlopers. But, this explanation seems odd. After all, the point of scholarship — of all those long hours of reading and studying and writing and editing — is to uncover truths, backed by research, and then to educate others. Sometimes we do that in the classroom for our students, of course, but even more significantly, we are supposed to be educating the world with our ideas. That’s especially true of academics (like me) employed by public universities, funded by tax payer dollars. That money, supporting higher education, is to (ideally) allow us to contribute to the world’s knowledge about our specific fields of study.


So if knowledge-sharing is the mission of the scholar, why would so many of us consciously want to create an environment of exclusion around our writing? As Steven Pinker asks in “Why Academics Stink at Writing”:

Why should a profession that trades in words and dedicates itself to the transmission of knowledge so often turn out prose that is turgid, soggy, wooden, bloated, clumsy, obscure, unpleasant to read, and impossible to understand?

Contrary to popular belief, academics don’t *just* write for other academics (that’s what conference presentations are for!). We write believing that what we’re writing has a point and purpose, that it will educate and edify. I’ve never met an academic who has asked for help with making her essay “more difficult to understand.” Now, of course, some academics do use jargon as subterfuge. Walt continues:

But if your prose is muddy and obscure or your arguments are hedged in every conceivable direction, then readers may not be able to figure out what you’re really saying and you can always dodge criticism by claiming to have been misunderstood…Bad writing thus becomes a form of academic camouflage designed to shield the author from criticism.

Walt, Billig, Pinker and everyone else who has, at one time or another, complained that a passage of academese was needlessly difficult to understand are right to be frustrated. I’ve made the same complaints myself. However, this generalized dismissal of “academese,” of dense, often-jargony prose that is nuanced, reflexive and even self-effacing, is, I’m afraid, just another bullet in the arsenal for those who believe that higher education is populated with up-tight, boring, useless pedants who just talk and write out of some masturbatory infatuation with their own intelligence. The inherent distrust of scholarly language is, at its heart, a dismissal of academia itself.

Now I’ll be the first to agree that higher education is currently crippled by a series of interrelated and devastating problems — the adjunctification and devaluation of teachers, the overproduction of PhDs, tuition hikes, endless assessment bullshit, the inflation of middle-management (aka, the rise of the “ass deans”), MOOCs, racism, sexism, homophobia, ableism, ageism, it’s ALL there people — but academese is the least egregious of these problems, don’t you think? Academese — that slow nuanced ponderous way of seeing the world — we are told, is a symptom of academia’s pretensions. But I think it’s one of our only saving graces.

The work I do is nuanced and specific. It requires hours of reading and thinking before a single word is typed. This work is boring at times — at times even dreadful — but it’s necessary for quality scholarship and sound arguments. Because once you start to research an idea — and I mean really research, beyond the first page of Google search results — you find that the ideas you had, those wonderful, catchy epiphanies that might make for a great headline or tweet, are not nearly as sound as you assumed. And so you go back, armed with the new knowledge you just gleaned, and adjust your original claim. Then you think some more and revise. It is slow work, but it’s necessary work. The fastest work I do is the writing for this blog, which I see as a space of discovery and intellectual growth. I try not to make grand claims for this blog, mostly for that reason.

The problem then, with academic writing, is that its core — the creation of careful, accurate ideas about the world — is born of research and revision and, most important of all, time. Time is needed. But our world is increasingly regulated by the ethic of the instant. We are losing our patience. We need content that comes quickly and often, content that can be read during a short morning commute or a long dump (sorry for the vulgarity, Ma), content that can be tweeted and retweeted and Tumblred and bit-lyed. And that content is great. It’s filled with interesting and dynamic ideas. But this content cannot replace the deep structures of thought that come from research and revision and time.

Let me show you what I mean by way of example:

Stanley has already taken quite a drubbing for this piece (and deservedly so) so I won’t add to the pile on. But I do want to point out that had this profile been written by someone with a background in race and gender studies, not to mention the history of racial and gendered representation in television, this profile would have turned out very differently. I’m not saying that Stanley needed a PhD to properly write this piece, what I’m saying is: the woman needed to do her research. As Tressie McMillan Cottom explains:

Here’s the thing with using a stereotype to analyze counter hegemonic discourses. If you use the trope to critique race instead of critiquing racism, no matter what you say next the story is about the stereotype. That’s the entire purpose of stereotypes. They are convenient, if lazy, vehicles of communication. The “angry black woman” traffics in a specific history of oppression, violence and erasure just like the “spicy Latina” and “smart Asian”. They are effective because they work. They conjure immediate maps of cognitive interpretation. When you’re pressed for space or time or simply disinclined to engage complexities, stereotypes are hard to resist. They deliver the sensory perception of understanding while obfuscating. That’s their power and, when the stereotype is about you, their peril.

Wanna guess why Cottom’s perspective on this is so nuanced and careful? Because she studies this shit. Imagine that: knowing what you’re talking about before you hit “publish.”

Or how about this recent piece on the “rise” of black British actors in America?

Carter’s profile of black British actors in Hollywood does a great job of repeating everything said by her interview subjects but is completely lacking in an analysis of the complicated and fraught history of black American actors in Hollywood. And that perspective is very, very necessary for an essay claiming to be about “The Rise of the Black British Actor in America.” So what is someone like Carter to do? Well, she could start by changing the title of her essay to “Black British Actors Discuss Working in Hollywood.” Don’t make claims that you can’t fulfill. Because you see, in academia, “The Rise of the Black British Actor in America” would actually be a book-length project. It would require months, if not years, of careful research, writing, and revision. One simply cannot write about hard-working black British actors in Hollywood without mentioning the ridiculous dearth of good Hollywood roles for people of color. As Tambay A. Obenson rightly points out in his response to the piece:

Unless there’s a genuine collective will to get underneath the surface of it all, instead of just bulletin board-style engagement. There’s so much to unpack here, and if a conversation about the so-called “rise in black British actors in America” is to be had, a rather one-sided, short-sighted Buzzfeed piece doesn’t do much to inspire. It only further progresses previous theories that ultimately cause division within the diaspora.

But the internet has created the scholarship of the pastless present, where a subject’s history can be summed up in the last thinkpiece that was published about it, which was last week. And last week is, of course, ancient history. Quick and dirty analyses of entire decades, entire industries, entire races and genders, are generally easy and even enjoyable to read (simplicity is bliss!), and they often contain (some) good information. But many of them make claims they can’t support. They write checks their asses can’t cash. But you know who CAN cash those checks? Academics. In fact, those are some of the only checks we ever get to cash.

Academese can answer those broad questions, with actual facts and research and entire knowledge trajectories. As Obenson adds:

But the Buzzfeed piece is so bereft of essential data, that it’s tough to take it entirely seriously. If the attempt is to have a conversation about the central matter that the article seems to want to inform its readers on, it fails. There’s a far more comprehensive discussion to be had here.

A far more comprehensive discussion is exactly what academics have been trained to do. We’re good at it! Indeed, Obenson has yet to write a full response to the Buzzfeed piece because, wait for it, he has to do his research first: “But a black British invasion, there is not. I will take a look at this further, using actual data, after I complete my research of all roles given to black actors in American productions, over the last 5 years.” Now, look, I’m not shitting all over Carter or anyone else who has ever had to publish on a deadline in order to collect a paycheck. I understand that this is how online publishing often works. And Carter did a great job interviewing her subjects. It’s a thorough piece that will certainly influence Buzzfeed readers to go see Selma (2015, Ava DuVernay). But it is not about the rise of the black British actor in America. It is an ad for Selma.

Now don’t get me wrong, I’m not calling for an end to short, pithy, generalized articles on the internet. I love those spurts of knowledge, bite-sized bits of knowledge. I may be well-versed in film and media (and really then, only my own small corner of it) but the rest of my understanding of what’s happening in the world of war and vaccines and space travel and Kim Kardashian comes from what I can read in 5 minute intervals while waiting for the pharmacist to fill my prescription. My working mom brain, frankly, can’t handle too much more than that. And that is how it should be; none among us can be experts in everything, or even a few things.

But here’s what I’m saying: we need to recognize that there is a difference between a 100,000-word academic book and a 1,500-word thinkpiece. They have different purposes and functions and audiences. We need to understand the conditions under which claims can be made and what facts are necessary to support them. That’s why articles are peer-reviewed and book monographs are carefully vetted before publication. Writers who are not experts can pick up these documents and read them and then…cite them! In academia we call this “scholarship.”

No, academic articles rarely yield snappy titles. They’re hard to summarize. Seriously, the next time you see an academic, corner them and ask them to summarize their latest research project in 140 characters — I dare you. But trust me, people — you don’t want to call for an end to academese. Because without detailed, nuanced, reflexive, overly-cited, and yes, even hedging writing, there can be no progress in thought. There can be no true thinkpieces. Without academese, everything is what the author says it is, an opinion tethered to air, a viral simulacrum of knowledge.

Understanding Your Academic Friend: Job Market Edition Part II

A few weeks ago I published part I of my 2-part post on the academic job market. I decided to break the post into two because when you write something like “part I of my 2-part post” it makes you sound important, like you have a real plan. Are you not impressed? These posts represent my attempts to translate the harrowing experience of applying for tenure track positions in academia into simple, easy-to-understand terms (and gifs) so that you, my dear suffering academic, can avoid this conversation with your Nana during Christmas dinner:

Nana: “Didja get that teachin’ job yet?”

You: “No, Nana, I’m still waiting to hear about first round interviews.”

Nana: “First round wha? I SAID: Didja get that teachin’ job yet?”

You: “No.”

Nana: “Boscov’s is hirin’.”

And then you go to Boscov’s and grab an application because, you know, Boscov’s!

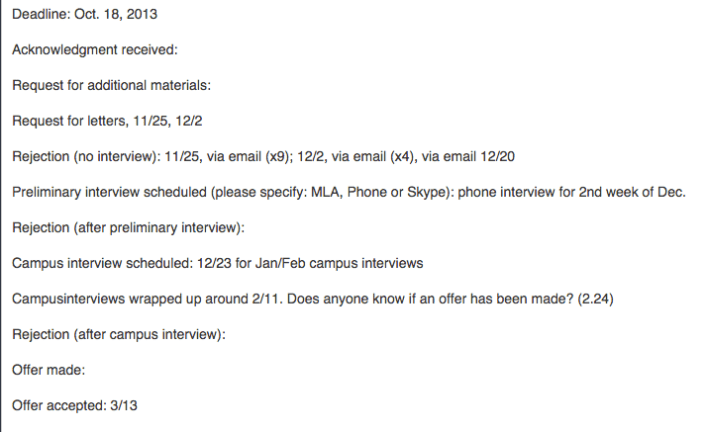

So where were we? I believe the last time we spoke, I was telling you all about the dark sad month of December, when most of your academic friends on the job market have hit peak Despair Mode. They’ve already sunk their heart and soul into those job applications and though they’ve likely heard *nothing* from the search committees yet, the Wiki gleefully marches forward with a parade of “MLA interviews scheduled!” and “campus interviews scheduled!” So your friend, the job candidate, is going to be depressed, anxious and hopeful, all at the same time. Thus, your primary job during the month of December is to keep your friend very intoxicated and very far away from the Wiki. Can you handle that?

Preparing for the Conference Interview

Assuming your sad friend was able to schedule some first round interviews and assuming he has recovered from his massive December hangover, the next step in the job market process is interview prep. First, a word on the conference interview. Not every academic field requires job candidates to attend their annual conference for a face-to-face first round interview (like I mentioned in my last post, many schools have started offering the option of first round phone or Skype interviews as a substitute), but still, many many departments prefer to conduct first round interviews in the flesh. For folks who live within driving distance of these conferences and for whom the conference is always a yearly destination, the face-to-face interview is actually a great thing: being able to look the search committee in the eye as you speak (are they bored? excited? offended?) helps you gauge your answers and your tone. I, for one, think I’m much better in person than over the phone.

But, unfortunately, loads of folks don’t have the funds to attend these annual conferences *just* to interview for a single job. This is especially problematic because many search committees don’t contact candidates about conference interviews until a few weeks (or even a few days!) before the interview. If you have ever tried to buy a plane ticket a few days before your departure date, you know that this is prohibitively expensive. For example, one year I scored a first round MLA interview when it was being held in Los Angeles. The plane ticket cost me over $400, plus the cost of one night in a hotel and taxis, etc. It’s hard to imagine another field in which the (already financially strapped) job candidate must pay hundreds of dollars just to interview. Later I found out that some of the other candidates for the same job had requested (and received) first round interviews via Skype. When I ended up making it to the next and final round of that particular search, I wondered, briefly, if it was because I had been so willing to fork over $400 in order to have a shot at a single interview. This is just one example of how academia perpetuates a cycle of poverty and privilege. But I digress…

Where were we? Oh yes, preparing for the conference interview. Usually my tactic is to study the research profile of every member of the search committee, study the makeup of the department and its courses, and compile a list of every possible question I might be asked during the 30-minute interview. Then I print all of that info onto notecards and spend the remaining days and hours leading up to the interview whispering sweet nothings over those notecards.

Attending the Conference Interview

If you are like me (and most academics I know), you really hate wearing a suit. It’s an outfit that communicates “I am not supposed to be wearing this but I put it on for you, Search Committee.” I own 3 suits and they all remind me of defeat.

http://www.corbisimages.com/

After donning your weird interview suit you head to the hotel where your interviews are being held. This is possibly the worst part of the conference interview: a lobby filled with shifty, big-suit-wearing, sullen academics who are all doing the same thing you’re doing: freaking the fuck out. The air is thick with perspiration but also something more ineffable than that, a pheromone possibly, that signals to everyone around you that your soul has been compromised. The stakes are so high (it’s your only interview in this job season!), the competition so great (all of these people are smart!), that the gravitas of the room feels wholly out of control but also wholly reasonable. You breathe in the fear of your cohort as you step into the crowded MLA elevators (so famous they have their own Twitter account) and that fear cloud follows you as you march down the carpeted hallway of the Doubletree Hotel, counting off room numbers until you reach the one containing your search committee. Often, as you’re about to knock, the previous job candidate is walking out. It’s very important that you try not to make eye contact with this individual or else you risk getting sucked into their vortex of anomie (pictured below):

image source: http://williamlevybuzz.blogspot.com/

Now begins the oddest part of the conference interview: being alone in a hotel room with a group of punchy, overly caffeinated search committee members you’ve never met before. You may need to perch on a bed during the interview. Some members of the search committee may go to the potty in the middle of your schpiel on how you “flipped” your classroom or had your students teach you or whatever pedagogical bullshit is currently in vogue. Time will move much faster than you think it can and before you know it, your conference interview (the one you paid $400 for) is over. You nervously shake hands and slink out the door, trying to avoid eye contact with the sweaty mess waiting in the hall. Now you wait…

The Campus Interview

It may take days, weeks or possibly months, but eventually someone will contact you to say that you did not make it to the next round, thank you very much for your time, we wish you luck in your job search, etc. But, maybe, just maybe, you are one of the lucky few who moves on to the final round of the search: the campus interview! At this point the pile of candidates has been whittled down from 200-400 to just 3 or 4 candidates. I have been on a total of 8 campus interviews in my life and they run the gamut from positively delightful (swank hotel, great meals, gracious department members) to the miserable (the time I was told I’d be eating all of my meals on a 2-day interview “on my own” [except one] because the Search Committee was…too busy to eat with me? I saved all my receipts from the food court, trust me). But campus interviews generally include the following:

- Q & A with the Search Committee

- A teaching demonstration, followed by Q & A

- A research presentation, followed by Q & A

- Meet and greets with students

- Meeting with the dean/provost/generic white male in expensive suit who is way too busy to be meeting with you

- A tour of the campus

- Classroom visits

- Meeting with real estate agent/ tour of town

- Group meals with various department members