In Defense of Academic Writing

Academic writing has taken quite a bashing since, well, forever, and that’s not entirely undeserved. Academic writing can be pedantic, jargony, solipsistic and self-important. There are endless think pieces, editorials and New Yorker cartoons about the impenetrability of academese. In one such piece, “Why Academics Can’t Write,” Michael Billig explains:

Throughout the social sciences, we can find academics parading their big nouns and their noun-stuffed noun-phrases. By giving something an official name, especially a multi-noun name which can be shortened to an acronym, you can present yourself as having discovered something real—something to impress the inspectors from the Research Excellence Framework.

Yes, the implication here is that academics are always trying to make things — a movie, a poem, themselves and their writing — appear more important than they actually are. These pieces also argue that academics dress simple concepts up in big words in order to exclude those who have not had access to the same educational expertise. In “On Writing Well,” Stephen M. Walt argues:

jargon is a way for professional academics to remind ordinary people that they are part of a guild with specialized knowledge that outsiders lack…

This is how we control the perimeter, our critics charge; this is how we guard ourselves from interlopers. But this explanation seems odd. After all, the point of scholarship (of all those long hours of reading and studying and writing and editing) is to uncover truths, backed by research, and then to educate others. Sometimes we do that in the classroom for our students, of course, but even more significantly, we are supposed to be educating the world with our ideas. That’s especially true of academics (like me) employed by public universities and funded by taxpayer dollars. That money is meant, ideally, to allow us to contribute to the world’s knowledge about our specific fields of study.


So if knowledge-sharing is the mission of the scholar, why would so many of us consciously want to create an environment of exclusion around our writing? As Steven Pinker asks in “Why Academics Stink at Writing”:

Why should a profession that trades in words and dedicates itself to the transmission of knowledge so often turn out prose that is turgid, soggy, wooden, bloated, clumsy, obscure, unpleasant to read, and impossible to understand?

Contrary to popular belief, academics don’t *just* write for other academics (that’s what conference presentations are for!). We write believing that what we’re writing has a point and purpose, that it will educate and edify. I’ve never met an academic who has asked for help with making her essay “more difficult to understand.” Now, of course, some academics do use jargon as subterfuge. Walt continues:

But if your prose is muddy and obscure or your arguments are hedged in every conceivable direction, then readers may not be able to figure out what you’re really saying and you can always dodge criticism by claiming to have been misunderstood…Bad writing thus becomes a form of academic camouflage designed to shield the author from criticism.

Walt, Billig, Pinker and everyone else who has, at one time or another, complained that a passage of academese was needlessly difficult to understand are right to be frustrated. I’ve made the same complaints myself. However, this generalized dismissal of “academese,” of dense, often jargony prose that is nuanced, reflexive and even self-effacing, is, I’m afraid, just another bullet in the arsenal for those who believe that higher education is populated with uptight, boring, useless pedants who talk and write only out of some masturbatory infatuation with their own intelligence. The inherent distrust of scholarly language is, at its heart, a dismissal of academia itself.

Now I’ll be the first to agree that higher education is currently crippled by a series of interrelated and devastating problems: the adjunctification and devaluation of teachers, the overproduction of PhDs, tuition hikes, endless assessment bullshit, the inflation of middle management (a.k.a. the rise of the “ass deans”), MOOCs, racism, sexism, homophobia, ableism, ageism. It’s ALL there, people. But academese is the least egregious of these problems, don’t you think? Academese, that slow, nuanced, ponderous way of seeing the world, is, we are told, a symptom of academia’s pretensions. But I think it’s one of our only saving graces.

The work I do is nuanced and specific. It requires hours of reading and thinking before a single word is typed. This work is boring at times (at times even dreadful), but it’s necessary for quality scholarship and sound arguments. Because once you start to research an idea (and I mean really research, beyond the first page of Google search results), you find that the ideas you had, those wonderful, catchy epiphanies that might make for a great headline or tweet, are not nearly as sound as you assumed. And so you go back, armed with the new knowledge you just gleaned, and adjust your original claim. Then you think some more and revise. It is slow work, but it’s necessary work. The fastest work I do is the writing for this blog, which I see as a space of discovery and intellectual growth. I try not to make grand claims on this blog, mostly for that reason.

The problem, then, with academic writing is that its core, the creation of careful, accurate ideas about the world, is born of research and revision and, most important of all, time. Time is needed. But our world is increasingly regulated by the ethic of the instant. We are losing our patience. We need content that comes quickly and often, content that can be read during a short morning commute or a long dump (sorry for the vulgarity, Ma), content that can be tweeted and retweeted and Tumblred and bit-lyed. And that content is great. It’s filled with interesting and dynamic ideas. But this content cannot replace the deep structures of thought that come from research and revision and time.

Let me show you what I mean by way of example:

Stanley has already taken quite a drubbing for this piece (and deservedly so), so I won’t add to the pile-on. But I do want to point out that had this profile been written by someone with a background in race and gender studies, not to mention the history of racial and gendered representation in television, it would have turned out very differently. I’m not saying that Stanley needed a PhD to write this piece properly; what I’m saying is that the woman needed to do her research. As Tressie McMillan Cottom explains:

Here’s the thing with using a stereotype to analyze counter hegemonic discourses. If you use the trope to critique race instead of critiquing racism, no matter what you say next the story is about the stereotype. That’s the entire purpose of stereotypes. They are convenient, if lazy, vehicles of communication. The “angry black woman” traffics in a specific history of oppression, violence and erasure just like the “spicy Latina” and “smart Asian”. They are effective because they work. They conjure immediate maps of cognitive interpretation. When you’re pressed for space or time or simply disinclined to engage complexities, stereotypes are hard to resist. They deliver the sensory perception of understanding while obfuscating. That’s their power and, when the stereotype is about you, their peril.

Wanna guess why Cottom’s perspective on this is so nuanced and careful? Because she studies this shit. Imagine that: knowing what you’re talking about before you hit “publish.”

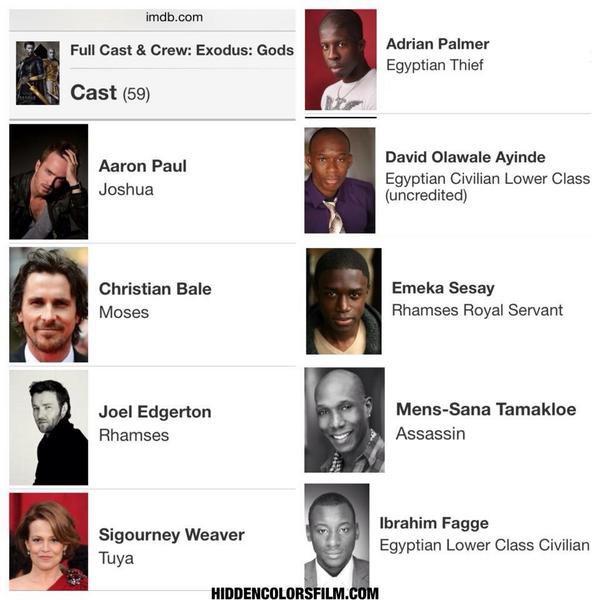

Or how about this recent piece on the “rise” of black British actors in America?

Carter’s profile of black British actors in Hollywood does a great job of repeating everything said by her interview subjects but completely lacks an analysis of the complicated and fraught history of black American actors in Hollywood. And that perspective is very, very necessary for an essay claiming to be about “The Rise of the Black British Actor in America.” So what is someone like Carter to do? Well, she could start by changing the title of her essay to “Black British Actors Discuss Working in Hollywood.” Don’t make claims that you can’t fulfill. Because you see, in academia, “The Rise of the Black British Actor in America” would actually be a book-length project. It would require months, if not years, of careful research, writing and revision. One simply cannot write about hard-working black British actors in Hollywood without mentioning the ridiculous dearth of good Hollywood roles for people of color. As Tambay A. Obenson rightly points out in his response to the piece:

Unless there’s a genuine collective will to get underneath the surface of it all, instead of just bulletin board-style engagement. There’s so much to unpack here, and if a conversation about the so-called “rise in black British actors in America” is to be had, a rather one-sided, short-sighted Buzzfeed piece doesn’t do much to inspire. It only further progresses previous theories that ultimately cause division within the diaspora.

But the internet has created the scholarship of the pastless present, where a subject’s history can be summed up in the last thinkpiece that was published about it, which was last week. And last week is, of course, ancient history. Quick and dirty analyses of entire decades, entire industries, entire races and genders, are generally easy and even enjoyable to read (simplicity is bliss!), and they often contain (some) good information. But many of them make claims they can’t support. They write checks their asses can’t cash. But you know who CAN cash those checks? Academics. In fact, those are some of the only checks we ever get to cash.

Academese can answer those broad questions, with actual facts and research and entire knowledge trajectories. As Obenson adds:

But the Buzzfeed piece is so bereft of essential data, that it’s tough to take it entirely seriously. If the attempt is to have a conversation about the central matter that the article seems to want to inform its readers on, it fails. There’s a far more comprehensive discussion to be had here.

A far more comprehensive discussion is exactly what academics have been trained to conduct. We’re good at it! Indeed, Obenson has yet to write a full response to the Buzzfeed piece because, wait for it, he has to do his research first: “But a black British invasion, there is not. I will take a look at this further, using actual data, after I complete my research of all roles given to black actors in American productions, over the last 5 years.” Now, look, I’m not shitting all over Carter or anyone else who has ever had to publish on a deadline in order to collect a paycheck. I understand that this is how online publishing often works. And Carter did a great job interviewing her subjects. It’s a thorough piece that will certainly influence Buzzfeed readers to go see Selma (2014, Ava DuVernay). But it is not about the rise of the black British actor in America. It is an ad for Selma.

Now don’t get me wrong, I’m not calling for an end to short, pithy, generalized articles on the internet. I love those spurts of bite-sized knowledge. I may be well-versed in film and media (and even then, only my own small corner of it), but the rest of my understanding of what’s happening in the world of war and vaccines and space travel and Kim Kardashian comes from what I can read in five-minute intervals while waiting for the pharmacist to fill my prescription. My working-mom brain, frankly, can’t handle much more than that. And that is how it should be; none of us can be experts in everything, or even in a few things.

But here’s what I’m saying: we need to recognize that there is a difference between a 100,000-word academic book and a 1,500-word thinkpiece. They have different purposes, functions and audiences. We need to understand the conditions under which claims can be made and what facts are necessary to support them. That’s why articles are peer-reviewed and monographs are carefully vetted before publication. Writers who are not experts can pick up these documents and read them and then…cite them! In academia we call this “scholarship.”

No, academic articles rarely yield snappy titles. They’re hard to summarize. Seriously, the next time you see an academic, corner them and ask them to summarize their latest research project in 140 characters. I dare you. But trust me, people: you don’t want to call for an end to academese. Because without detailed, nuanced, reflexive, over-cited and, yes, even hedging writing, there can be no progress in thought. There can be no true thinkpieces. Without academese, everything is what the author says it is, an opinion tethered to air, a viral simulacrum of knowledge.

2014 in Review

The WordPress.com stats helper monkeys prepared a 2014 annual report for this blog.

Here’s an excerpt:

The Louvre Museum has 8.5 million visitors per year. This blog was viewed about 99,000 times in 2014. If it were an exhibit at the Louvre Museum, it would take about 4 days for that many people to see it.

My Diane Rehm Fan Fiction is Live on Word Riot

Sometimes I try to write creative non-fiction. Luckily, the good folks at Word Riot, a site I greatly admire, thought this was acceptable for publication in their December 2014 issue. I’m super honored and would love it if you’d read it. It’s about my idol, Diane Rehm.

The link to “Diane” is here.

Mascara Flavored Bitch Tears, or Why I Trolled #InternationalMensDay

Here are some recent news stories about women:

In Afghanistan, a 3-year-old girl was snatched from her front yard, where she was playing with friends, and raped in her neighbor’s garden by an 18-year-old man. The rapist then tried, unsuccessfully, to kill the child. Currently this little girl is in intensive care in Kabul, fighting for her life. But even if this little girl survives this horrifying experience, her parents tell the reporter, she will carry the shame and stigma of being raped for the rest of her life. The parents hope to bring the rapist to court, but as they are poor, they are certain their family will not receive justice. The child’s mother and grandmother have threatened to commit suicide in protest.

In Egypt, Raslan Fadl, a doctor who routinely performs genital mutilation surgery on women, was acquitted of manslaughter charges. Dr. Fadl performed the controversial surgery on 12-year-old Sohair al-Bata’a in June 2013 and she later died from complications stemming from the procedure. According to The Guardian, “No reason was given by the judge, with the verdict being simply scrawled in a court ledger, rather than being announced in the Agga courtroom.”

Washed-up rapper Eminem (né Marshall Mathers) leaked portions of his new song, “Vegas,” in which he addresses Iggy Azalea (singer and appropriator of racial signifiers) thusly:

“Unless you’re Nicki

grab you by the wrist let’s ski

so what’s it gon be

put that shit away Iggy

You don’t wanna blow that rape whistle on me”

Azalea’s response was, naturally, disgust and a yawn:

This story was followed, finally, by a story on the growing sexual assault allegations against Bill Cosby. Cosby has been plagued by rumors of sexual misconduct for decades. However, a series of recent events, including Cosby’s ill-conceived idea to invite fans to “meme” him and Hannibal Buress’ recent stand-up bit about the star, brought the issue back into the national spotlight. As Roxane Gay succinctly notes, “There is a popular and precious fantasy that abounds, that women are largely conspiring to take men down with accusations of rape, as if there is some kind of benefit to publicly outing oneself as a rape victim. This fantasy becomes even more elaborate when a famous and/or wealthy man is involved. These women are out to get that man. They want his money. They want attention. It’s easier to indulge this fantasy than it is to face the truth that sometimes, the people we admire and think we know, are capable of terrible things.”

***

I cite these horrific stories, happening all over the world to women of all ages, races and class backgrounds, because they are all things that happen to women because they are women. These are all crimes in which women’s bodies are seen as objects for men to take and use as they wish simply because they can. The little girl in Afghanistan was raped because she has a vagina and because she is too small to defend herself. Cosby’s alleged victims were raped because they have vaginas and because they were naive enough to assume that their boss (the humanitarian, the art collector, the seller of pudding pops) would not drug them. And Iggy Azalea, bless her confused little heart, makes a great point: why is it that when men disagree with women, their first threat is one of sexual assault? Why doesn’t Eminem write lyrics about how Azalea is profiting off of another culture or that her music sucks? Because those critiques have nothing to do with Azalea’s vagina. If you want to disempower or threaten or traumatize a woman, you have to remind her she is, at the end of the day, nothing more than a vagina that can be invaded, pillaged and emptied into.

But you know this, don’t you, readers? Why am I reminding you of the fragile space women (and especially women of color) occupy in this world, of the delicate tightrope we walk between arousing the respect of our male peers and arousing their desires to violate our vaginas? Because of International Men’s Day.

“There’s an International Men’s Day?” you’re asking yourself right now. “What does that entail?” Great question, hypothetical reader. This is from their official website:

“Objectives of International Men’s Day include a focus on men’s and boy’s health, improving gender relations, promoting gender equality, and highlighting positive male role models. It is an occasion for men to celebrate their achievements and contributions, in particular their contributions to community, family, marriage, and child care while highlighting the discrimination against them.”

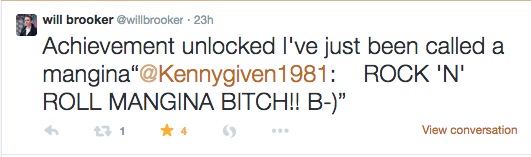

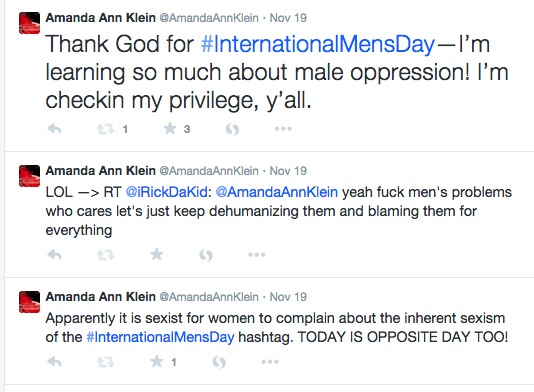

When I opened up my Twitter feed on Wednesday, I noticed the #InternationalMensDay hashtag popping up in my feed now and then, mostly because my friend, Will Brooker, was engaging many of the men using the hashtag in conversations about the meaning of the day and its possible ramifications.

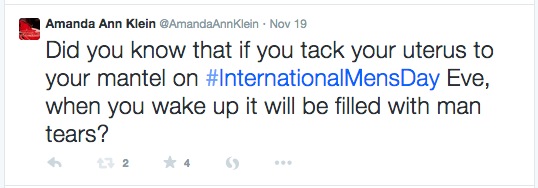

Now, I’m no troll (and neither is Will, by the way). Yes, I like to talk shit, and I have been known to bust my friends’ chops for my own amusement (something I’ve written about in the past), but generally, I do not spend my time, in real life or on the internet, looking for a fight. But International Men’s Day struck me as so ill-conceived, so offensive, that I couldn’t help myself.

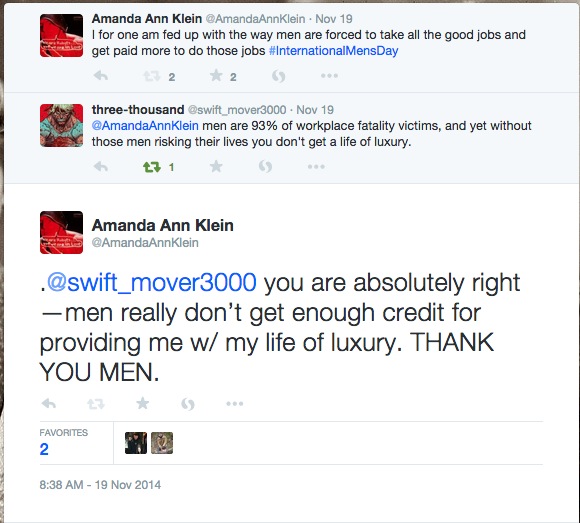

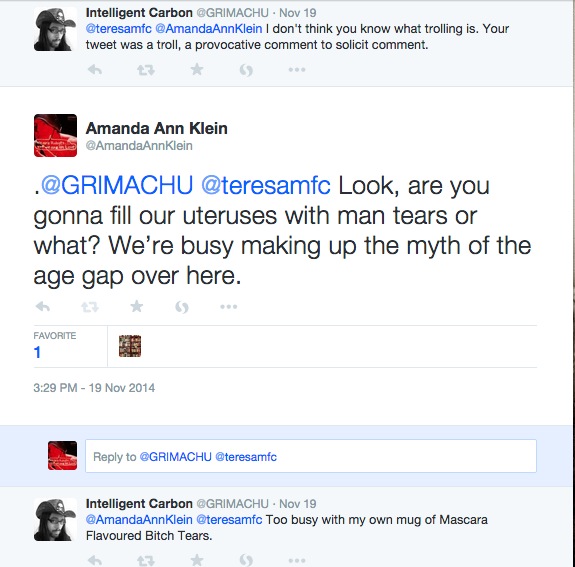

Within minutes I had several irate IMD supporters in my mentions:

I was informed that if you question the need for an International Men’s Day, you actually *hate* men:

These men were outraged that I could so callously dismiss the very real problems men had to deal with on a day-to-day basis:

Yes, apparently International Men’s Day is needed because all of the feminists are sitting around cackling about the high rates of male suicide, or the fact that more men die on the job than women, or that more men are homeless than women. And since women have their own day on March 8th (and African Americans get the whole month of February!), why can’t men have their own day, too? After all, men are people, right? Of course they are. But that’s not the point.

As a Huffington Post editorial put it:

“The problem with the IMD idea is that men’s vulnerabilities are not clearly and consistently put into the context of gender inequality and the ongoing oppression of women. For example, a review of homicide data shows that where homicide rates against men are high, violence against women by male partners is also high (and female deaths by homicides more likely to happen). Or, for example, men face particular health problems because we teach boys to be powerful men by suppressing a range of feelings, by engaging in risk-taking behaviors, by teaching them to fight and never back down, by saying that asking for help is for sissies — that is, the values of manhood celebrated in male-dominated societies come with real costs to men ourselves.”

Yes, the problem with IMD is that the real problems faced by men are not the direct result of the fact that they are men. Let me offer a personal example here to explain what I mean. I am a white, upper middle class, high-achieving white woman. According to studies, I am more likely to develop an eating disorder than other women. And eating disorders are very much tied to gender in that women face more pressure to be thin than men do. But does that mean there should be an entire day for white, upper middle class, high-achieving white women in order to bring awareness to the fact that we are more likely to acquire an eating disorder than others? No. Because the point of having a “day” or a “month” devoted to a particular group of people is to shed light on the unique challenges they face and the achievements they’ve made because otherwise society would not take notice of these challenges and achievements. Let me say that again: because otherwise society would not take notice of these challenges and achievements.

We do not need an International White, Upper Middle Class, High-Achieving White Woman Day because I see plenty of recognition of the challenges and achievements of my life; in the representation game, white women fall just behind white men in the amount of representation we get in the news and in popular culture. Likewise, we do not need an International Men’s Day because, really, every day is men’s day. Every. Single. Day.

As more and more angry replies began to fill up my Twitter feed, I knew I should abandon ship. I would never convince these men that they do not need a day devoted to men’s issues since “men’s issues,” in our culture, are simply “issues.” But I couldn’t help myself. These men were so aggrieved, so very hurt that I could not see how they were victims, suffering in a world of rampant misandry:

I realize that giving an oppressed group of people their own day or month can be a pretty pointless gesture. It could even be argued that these days serve to further marginalize groups by cordoning off their needs, their history, their lives, from the rest of the world. Still, after #gamergate and Time magazine readers voting to ban the word “feminism,” to name two recent public attacks against women, it’s hard for me not to see International Men’s Day as an attack on women, and feminists in particular, a kind of tit for tat.

So yeah, I realize that by trolling the #InternationalMensDay hashtag I did little to promote the cause of feminism or to educate these men about why IMD might be problematic. But I didn’t do it to educate anyone or to promote a cause. I did it, you see, because sometimes in the face of absurdity, our only choice is to cloak ourselves in sarcasm and great big mugs of mascara flavored bitch tears.

Unbearable Whiteness of Gone Girls

Hullo, dear readers. I wrote a short piece about whiteness, women and the missing persons narrative in popular media for Avidly, a site I very much admire. Here is an excerpt:

“I had barely considered the role whiteness plays in the missing persons narrative when I first read Gillian Flynn’s novel two years ago. Race comes up in the novel a couple of times but mostly Gone Girl is a story about white people who we are not necessarily cued to think of as being white. Their whiteness is not highlighted as something which has any bearing on the narrative events. Whiteness in the novel Gone Girl, as in so much of American mass culture, is a neutral character trait, the default setting on a character, the box that remains unchecked.”

Read the full essay here.

Debating the Return of Twin Peaks

You may have heard that Twin Peaks, beloved cult television of my adolescence, is getting a third season on Showtime. That won’t happen until 2016. In the meantime, I’m going to quietly weep about it. Why am I blue? I explain over at Antenna and talk about it with two other fans, Jason Mittell and Dana Och.

Here’s an excerpt:

“I started watching Twin Peaks when ABC aired reruns in the summer of 1990, after some of my friends started discussing this ‘crazy’ show they were watching about a murdered prom queen. During the prom queen’s funeral her stricken father throws himself on top of her coffin, causing it to lurch up and down. The scene goes on and on, then fades to black.

I started watching based on that anecdote alone and was immediately hooked. Twin Peaks was violent, sexual, funny and sad, all at the same time – I was 13 and I kept waiting for some adult to come in the room and tell me to stop watching it. My Twin Peaks fandom felt intimate, and, most importantly, very illicit.

One month before I turned 14, Lynch’s daughter published The Secret Diary of Laura Palmer, a paratext meant to fill in key plot holes and offer additional clues about Laura’s murder. But really, it was like an X-rated Are You There God? It’s Me, Margaret. The book was far smuttier than the show and my friends and I studied it like the Talmud. That book, coupled with Angelo Badalamenti’s soundtrack, which I played on repeat on my tape deck, created my first truly immersive TV experience.”

Read the whole thing here.

Understanding Your Academic Friend: Job Market Edition Part II

A few weeks ago I published part I of my 2-part post on the academic job market. I decided to break the post into two because when you write something like “part I of my 2-part post” it makes you sound important, like you have a real plan. Are you not impressed? These posts represent my attempts to translate the harrowing experience of applying for tenure track positions in academia in simple, easy-to-understand terms (and gifs) so that you, my dear suffering academic, can avoid this conversation with your Nana during Christmas dinner:

Nana: “Didja get that teachin’ job yet?”

You: “No, Nana, I’m still waiting to hear about first round interviews.”

Nana: “First round wha? I SAID: Didja get that teachin’ job yet?”

You: “No.”

Nana: “Boscov’s is hirin'”

And then you go to Boscov’s and grab an application because, you know, Boscov’s!

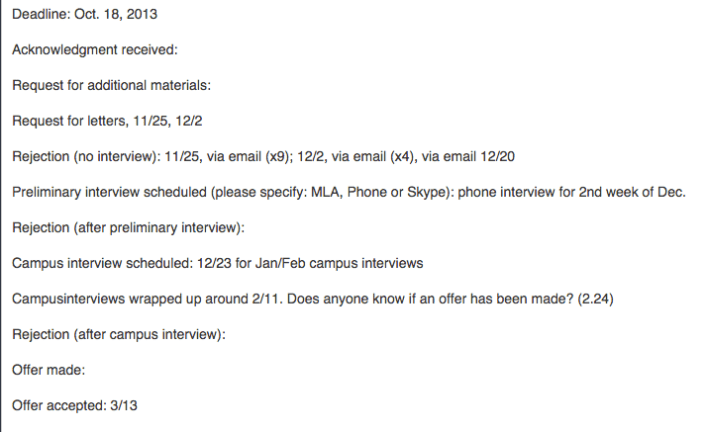

So where were we? I believe the last time we spoke, I was telling you all about the dark, sad month of December, when most of your academic friends on the job market have hit peak Despair Mode. They’ve already sunk their heart and soul into those job applications and though they’ve likely heard *nothing* from the search committees yet, the Wiki gleefully marches forward with a parade of “MLA interviews scheduled!” and “campus interviews scheduled!” So your friend, the job candidate, is going to be depressed, anxious and hopeful, all at the same time. Thus, your primary job during the month of December is to keep your friend very intoxicated and very far away from the Wiki. Can you handle that?

Preparing for the Conference Interview

Assuming your sad friend was able to schedule some first-round interviews and assuming he has recovered from his massive December hangover, the next step in the job market process is interview prep. First, a word on the conference interview. Not every academic field requires job candidates to attend their annual conference for a face-to-face first-round interview (as I mentioned in my last post, many schools have started offering the option of first-round phone or Skype interviews as a substitute), but still, many, many departments prefer to conduct first-round interviews in the flesh. For folks who live within driving distance of these conferences and for whom the conference is always a yearly destination, the face-to-face interview is actually a great thing: being able to look the search committee in the eye as you speak (are they bored? excited? offended?) helps you gauge your answers and your tone. I, for one, think I’m much better in person than over the phone.

But, unfortunately, loads of folks don’t have the funds to attend these annual conferences *just* to interview for a single job. This is especially problematic because many search committees don’t contact candidates about conference interviews until a few weeks (or even a few days!) before the interview. If you’ve ever tried to buy a plane ticket a few days before your departure date, you know that this can be prohibitively expensive. For example, one year I scored a first-round MLA interview when the conference was being held in Los Angeles. The plane ticket cost me over $400, plus the cost of one night in a hotel, taxis, etc. It’s hard to imagine another field in which the (already financially strapped) job candidate must pay hundreds of dollars just to interview. Later I found out that some of the other candidates for the same job had requested (and received) first-round interviews via Skype. When I ended up making it to the next and final round of that particular search, I wondered, briefly, if it was because I had been so willing to fork over $400 in order to have a shot at a single interview. This is just one example of how academia perpetuates a cycle of poverty and privilege. But I digress…

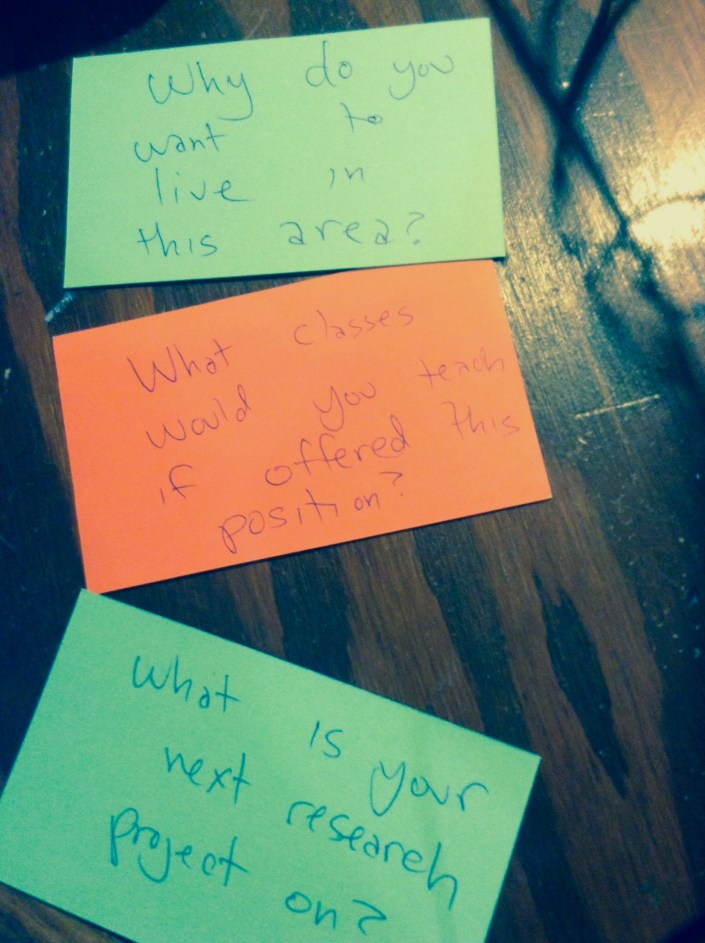

Where were we? Oh yes, preparing for the conference interview. Usually my tactic is to study the research profile of every member of the search committee, study the makeup of the department and its courses, and compile a list of every possible question I might be asked during the 30-minute interview. Then I print all of that info onto note cards and spend the remaining days and hours leading up to the interview whispering sweet nothings over those note cards.

Attending the Conference Interview

If you are like me (and most academics I know), you really hate wearing a suit. It’s an outfit that communicates “I am not supposed to be wearing this but I put it on for you, Search Committee.” I own 3 suits and they all remind me of defeat.


After donning your weird interview suit you head to the hotel where your interviews are being held. This is possibly the worst part of the conference interview: a lobby filled with shifty, big-suit-wearing, sullen academics who are all doing the same thing you’re doing: freaking the fuck out. The air is thick with perspiration but also something more ineffable than that, a pheromone possibly, that signals to everyone around you that your soul has been compromised. The stakes are so high (it’s your only interview in this job season!), the competition so great (all of these people are smart!), that the gravitas of the room feels wholly out of control but also wholly reasonable. You breathe in the fear of your cohort as you step into the crowded MLA elevators (so famous they have their own Twitter account) and that fear cloud follows you as you march down the carpeted hallway of the Doubletree Hotel, counting off room numbers until you reach the one containing your search committee. Often, as you’re about to knock, the previous job candidate is walking out. It’s very important that you try not to make eye contact with this individual or else you risk getting sucked into their vortex of anomie (pictured below):


Now begins the oddest part of the conference interview: being alone in a hotel room with a group of punchy, overly caffeinated search committee members you’ve never met before. You may need to perch on a bed during the interview. Some members of the search committee may go to the potty in the middle of your spiel on how you “flipped” your classroom or had your students teach you or whatever pedagogical bullshit is currently in vogue. Time will move much faster than you think it can, and before you know it, your conference interview (the one you paid $400 for) is over. You nervously shake hands and slink out the door, trying to avoid eye contact with the sweaty mess waiting in the hall. Now you wait…

The Campus Interview

It may take days, weeks or possibly months, but eventually someone will contact you to say that you did not make it to the next round, thank you very much for your time, we wish you luck in your job search, etc. But, maybe, just maybe, you are one of the lucky few who moves on to the final round of the search: the campus interview! At this point the pile of candidates has been whittled down from 200-400 to just 3 or 4 candidates. I have been on a total of 8 campus interviews in my life and they run the gamut from positively delightful (swank hotel, great meals, gracious department members) to downright miserable (the time I was told I’d be eating all of my meals on a 2-day interview “on my own” [except one] because the Search Committee was…too busy to eat with me? I saved all my receipts from the food court, trust me). But campus interviews generally include the following:

- Q & A with the Search Committee

- A teaching demonstration, followed by Q & A

- A research presentation, followed by Q & A

- Meet and greets with students

- Meeting with the dean/provost/generic white male in expensive suit who is way too busy to be meeting with you

- A tour of the campus

- Classroom visits

- Meeting with real estate agent/tour of town

- Group meals with various department members

Campus interviews are also challenging because they need to occur when faculty and students are on campus, which means they happen during the semester, when the job candidate (whether she is a graduate student, TT professor, or contingent faculty) most likely has classes of her own to teach. So, for example, last year when I was on the market I had 3 campus interviews (yay!). But then I had to scramble to find colleagues who were willing and available to teach my classes for me (and yes, it’s really awkward to ask a co-worker to cover your class so that you can interview for another job. Thanks guys! <3). That also means you’ll be doing a lot of grading and course planning (not to mention interview prep — hello again, note cards!) on planes and in airports. During the month of February I was out of town more than I was in town.

Let me assure you that campus interviews aren’t inherently traumatic. In fact, they can be downright pleasant if you think of the campus interview as a two- or three-day party thrown in your honor during which people will ask all manner of questions about your research and teaching and your big old brain. It’s kind of an academic’s wet dream if I’m being honest. We lovelovelove talking about ourselves. One thing that makes the campus interview difficult, though, is that it requires you to perform your Best Self (the Self that is continuously charming, smart, ethical, engaged) all day, for several days in a row. When you wake in the morning at the Best Western you will pull your Best Self out of the closet and iron it. Throughout the day you will tug and pull at the Best Self, making sure it is neat and presentable and that Tired Self or I-Still-Have-Papers-to-Grade-for-my-Actual-Job Self or Your-Kids-Are-Crying-Because-This-Is-Your-Third-Trip-This-Month Self doesn’t peek through. It is a days-long exercise in faking it.

In order to be a viable job candidate it is necessary to imagine yourself (I mean *really* imagine yourself) working at University X: teaching their students, collaborating with their faculty and staff, doing your research in their kickass libraries, etc. You need to make yourself fall in love with University X in order to make the Search Committee fall in love with you. And I suppose that’s why it hurts a little more to get rejected at this round than you might expect. Because when you get rejected, YOU get rejected. All of you. And that’s tough.

Campus interviews are also hard because so many things can and do go wrong — from travel mishaps to weather-related delays to folks (usually well-meaning members of the search committee) who say and do the wrong things at the wrong times. Below is a small sampling of some of the stories academics sent to me when I asked “What was your worst campus interview experience?”

“Of course I have a couple, but the one most worth talking about was this: An older male (tenured) faculty member who, while I was a captive audience in his car, said, ‘I know there are some questions that it’s illegal to ask you, but I don’t know what they are, so you’ll just need to tell me if I ask something inappropriate.’ Yes, please let me do all the work of managing the conversation, navigating complicated power structures that you’ve just managed to make even more tricky despite LAWS designed to keep you from doing so, and disciplining you as to correct behavior–all while trying to impress you so I might actually have a shot at paying off my student loans someday. Sheesh.”

“Following a [campus] interview, several weeks later, I’m called by a search committee member, who tells me clearly that he’s not offering me the job, since the decision isn’t yet made. But he wants to know whether I’d accept it if offered. I don’t understand, trying to be nice in saying, in effect, “why don’t you offer it to me and find out,” and he rambles on about junior candidates “playing” his university by not accepting jobs, and them not wanting to waste their time on me if I’m one of them. No salary is mentioned, no details offered — I’m just supposed to tell him there and then whether I’d accept.”

“I was on a campus visit and went to lunch at the swankiest restaurant in town. As I was served my quiche lorraine, I happened to notice an older man projectile vomiting into an empty pitcher. I was the only one who had this view, and it was all I could do to eat and smile and answer my seatmates’ questions while the staff cleaned up.”

“I get a call one afternoon from the search committee chair. I’m a finalist for the job (for which I wasn’t even phone interviewed, so I’m not expecting any of this), and she’s taken the liberty of booking me a flight. For the next week (!). She suggests she could change it “if I really need to,” but it’s clear what that’d mean to my candidacy. Said flight leaves at 11pm, connects in Chicago at around 3am, with a two hour layover, and I’ll be met at the airport by someone who’ll drive me the remaining hour. I am told I can sleep in the car, but of course I can’t actually do that. I’m then assured that since they know this flight “isn’t ideal,” on the first day, “all” they’ll ask of me is to have a lunch, an afternoon coffee with grad students, a dinner, and a meeting with the grad and undergrad committees who’ll “just” ask me what classes I could teach. When I arrive at the university, with it snowing outside, the inn they’ve put me up in doesn’t have my room ready (it’s ~8am), so I just have to sit in the lobby and explore the snowy environs for a couple of hours. And that meeting with the grad and undergrad cttes. turns out to be about 12-15 people, all of whom have questions for me, grilling me about the finer points of my diss and dense theoretical issues for 2 hours.”

“A university was flying me in for a Monday-Tuesday campus visit. They had me scheduled to arrive very late on Sunday, so that I’d get in after 1am. They had things scheduled at 8 the next morning, so, naturally, I asked if I could come earlier. They told me no. A flight delay meant that I first got to the hotel at 2:45 and the front desk was closed. I then had to frantically call the after-hours line, which advised that I walk 3 blocks — with my luggage, in the middle of the night — and get keys from another site that the company ran. I get in, go to bed, and am up at 7 for my first appointment at 8. The person never shows. I call the department, but b/c it’s early, they take a while to call me back. It was probably 8:45 when they tell me that I should walk — well over a mile — to campus to make sure I’m not late for my 9am meeting. I sweat through my shirt.”

“When I was still a green ABD [dissertation not completed], I found out I was a finalist for a position at a prestigious school, one which I didn’t think would even look at me twice. Even my dissertation chair was surprised that I was invited for a final round interview. My first night there I had dinner with the chair of the search committee who casually informed me that they had a visiting professor in the department who was also competing for the position. That helped to explain the aloof behavior of everyone I met the next day, as it was clear they all really liked the visiting prof and wanted her to keep her job. For example, after my teaching demonstration the search committee took me to a Chinese lunch buffet. During lunch everyone at the table talked only to each other about people and things I didn’t know. Or they were silent. I would occasionally try to break the silence by asking different people at the table about themselves or their families. They would answer me politely, then go silent again or start a private conversation with someone else at the table. This was super intimidating for a young, insecure scholar and so halfway through lunch I got up, went to the bathroom, and cried. Then I dried my tears, reapplied my makeup and went back out to lunch. No one even noticed. I did not get the job but was pleased to hear that they offered the visiting prof the job and she turned them down for a better place.”

“I have a really wonderful MLA interview with the chair of the department. She’s really interesting, engaged, etc. The pay is terrible at this place, the course load enormous, and the town/village is not that great. But, I am excited–in part because I liked the chair so much. Note: chair is the only person at MLA. When I get there, I learn that a committee–not the department, not the chair, a committee of five–will exclusively vote on who gets the job. There is both a teaching and research talk. At the research talk, no one from the committee shows. I give a talk to three people. I have yet to meet the committee who’s voting. I don’t meet anyone on the committee for meals, coffee anything. They are the only ones who are voting. I eventually have one large Q and A with the committee. That is the only time I see them. At the teaching talk, one member of the committee shows, for which I am absurdly grateful to him. It is clearly being implied (to the chair?) that they are choosing not to consider my candidacy at all. Yet I’m there for 2 and 1/2 days.”

“I went to my final dinner with 2 committee members and a person outside the department. One of the committee members was the only junior person [in my field] in the department. She seemed super stressed about tenure and her place at the uni. That should have been my first sign. She also asked me all kinds of badgering questions about my theoretical approach, training, etc. Needless to say, after two bottles of wine for the table (!!!), I went to visit the ladies room to take a breather. The junior member followed me into the bathroom to ask me why I want a job there, to talk shit about her department, and tell me that I could do better. The weirdest part is that the chair of search committee in my exit interview told me the job was mine and they really wanted to hire me. In the end, they offered it to someone else and those folks act like they have no idea who I am when I see them at conferences.”

“I could tell you my most horrific campus interview story was when a member of the search committee noticed I saw him picking his nose and then stopped talking to me. I could tell you my most horrific campus interview story was when members of the search committee made a toast to finding their new hire (i.e., me) and then called me two weeks later to say I didn’t get the job. I could tell you my most horrific interview story was when I had my bags packed to go interview for my dream job and the dean cancelled my interview. I could tell you my most horrific story was when my old department offered me my old job back but then rescinded the offer after I asked for $2,500 more. I could tell you my most horrific story was when a search committee chair called me one night and said it was down to me and another candidate — only to call the next day and say the committee was re-opening the search. But really, my most horrific interview story was when my current employer made me an offer, and I accepted it. The other stories are just that: stories. Comedic ones at this point. As the old saying goes, comedy is tragedy plus time. But my current job is just tragedy in the eternal present.”

Or this campus interview horror story here, a story which many commenters over at Inside Higher Ed thought was somehow exaggerated or false. But dear readers, I assure you it was not.

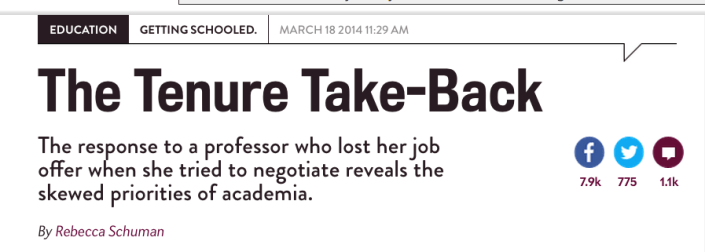

The Waiting, The Waiting, The Waiting

Well, this could go on for quite some time, I’m afraid. Remember that job that required a $400 plane ticket to Los Angeles? Well, after my campus interview I waited months for news. Then one day a form letter from the university’s Human Resources department arrived in my mailbox. It began “Dear Applicant,” and then informed me that the position for which I had been interviewing had been filled. That’s right, after months of interviewing, after flying roundtrip to LA for my first interview, and then flying again, mid-semester (they paid this time), for the final round interview, I didn’t even receive a rejection with my name on it. That was some major bullshit. There is also the famous case last year of a philosophy candidate who was offered a tenure track job, then had the offer rescinded when she asked for things like maternity leave and a course release. Lean in, my ass.

Yes, the waiting can take MONTHS. Because clearly hiring a professor requires the same timeline as vetting a Supreme Court Justice (actually it takes longer). We are that important, don’t you know? Then, one fine day you get that letter or email or (if you are super lucky) a phone call that says “I regret to inform you…” and then you know that 9 months of work have been in vain. So you take a deep breath and gird your loins because it’s now April and next year’s job season is already gearing up. Maybe you should try again, just one more time? I’ll bet your suit still fits.

And really, this gets to the core of the problem with the academic job market — the amount of preparatory work, the difficulty of making it to the next round, the days-long interviews, and then the waiting — all for a job that ultimately pays way less than you think it does. Keep in mind that the tenure track job — as a concept and as a reality — is slowly disappearing. As the old Catskills joke goes: “These jobs are terrible and there’s so few of them.”

***

So, there you have it: my comprehensive guide to the academic job search. What have I missed? What stories do you have to share? (I’ll take good ones, too.) Thanks for reading and happy job hunting. May the odds be ever in your favor.

Understanding Your Academic Friend: Job Market Edition

“If you and your spouse don’t like living 400 miles apart, why don’t you just get jobs at the same university?”

“You miss living near your mom? Well, there are like 5 colleges in her town — just work at one of those!”

“You still don’t know anything about that assistant professor job? Didn’t you apply to it 9 months ago?”

“Wow, your salary is terrible. Why don’t you work for a school that pays better wages?”

“Want me to talk to my friend’s mom, the dean at University X? I’ll bet she can hook you up with a job there and then we’ll live closer to each other!”

I’ve had to answer all of these questions — or some variation of them — ever since I completed my PhD 7 years ago and began looking for tenure track jobs. The people asking these questions are friends and family who love me very much but who just cannot understand why a “smart, hard-working” lass like me has such limited choices when searching for permanent employment as a professor. When I’m asked these questions I need to pause and take a deep breath because I know the rant that’s about to issue forth from my mouth is going to sound defensive, irate and even paranoid to my concerned listener. When I finish the rant, I know my concerned listener is going to slowly back away from me, all the while secretly dialing 9-1-1.

In the interest of generating a better understanding between academics and the people who love them, I’ve decided to write a post explaining exactly how the academic job market works for someone like me, a relatively intelligent, hard-working lady with a PhD in the humanities. My experiences do not, of course, represent the experiences of all academics hunting for jobs, nor do they represent the experiences of all humanities PhDs (they do, of course, represent the experiences of all humanities unicorns though). I think this post will prove useful for many academics as they return to the Fall 2014 Edition of the Job Market.

So, my dear academics, the next time a friend says “I just don’t understand why a smart, hard-working person like you can’t get a job,” you can just pull out your smart phone, load up this post, and then sit down and have a stress-free cocktail while I school your well-meaning friend/mother-in-law/neighbor about what an academic job search entails and, more importantly, how it feels. I should note that I have been successful on the job market (which is why I’m currently employed) but for the purposes of this post I’m going to describe (one of my) unsuccessful attempts at the job market, during the 2013-2014 season. Enjoy that sweet, sweet schadenfreude, you vultures.

***

Spring 2013

Though job ads usually don’t go live until the fall, the academic job search usually begins the spring before. At this point all you really need to be doing is selecting three individuals in your field (preferably three TENURED individuals) who think you’re swell and asking them if they will write a letter of recommendation. It’s necessary to make this request months in advance of application deadlines since many of these folks are super busy. You should also lock yourself in your bedroom and do dips, Robert-De-Niro-in-Cape-Fear style, because upper body strength is important. Who knows what the fall may bring.

Summer 2013

Job ads still haven’t been posted yet, but at this point any serious job candidate is working on her job materials. These are complex documents with specific (and often contradictory) rules and limits. Here’s a breakdown of some (not all, no, there will be so much more to write and obsess over once actual job ads are posted) of the documents the academic must prepare in advance of the job season:

1. The Cover Letter

The cover letter is a nightmare. You have 2 pages (single spaced, natch) to tell the search committee about: who you are, where you were educated, why you’re applying to this job, why you’re a good fit for this job, all the research you published in the past and why it’s important, all the research you’re working on now and why that’s important, the classes you’ve taught and why you’ve taught them, the classes you could teach at University X, if given the chance, and your record of service. You explain all of this without underselling OR overselling yourself and you must write it in such a way that the committee won’t fall asleep during paragraph two (remember, most of these jobs will have anywhere from 200-400 applicants so your letter must STAND OUT). You will draft the cover letter, then redraft it, then send it to a trusted colleague, revise it a few more times, send it to several more trusted colleagues (henceforth TCs), obsess, weep, and revise it one more time. Then more De Niro dips.

2. The CV

The curriculum vitae is not a resume. Whereas the primary virtue of a resume is its brevity, the curriculum vitae goes on and on and on. Most academics keep their CVs fairly up-to-date, so getting the CV job market ready isn’t very time-consuming. Still, it’s always a good idea to send it along to some TCs for feedback and copyediting. And don’t worry about those poor, overworked TCs: academics love giving other academics job market advice almost as much as mothers like to share labor and delivery stories with other mothers. There is unity in adversity. We also drink in the pain of others like vampires.

3. Statement of Teaching Philosophy

The statement of teaching philosophy (aka, teaching statement) is basically a narrative that details your approach to education in your field. You usually offer examples from specific classes and explain why your students are totally and completely engaged with the amazing lessons and assignments you have created for them. What’s super fun about these documents is that every school you apply to will ask for a slightly different version (and some, bless them, might not request it at all). Some search committees want a one-page document and others want two-page documents and still others don’t specify length at all (a move designed specifically to fuck with the perpetually anxious job candidate). Some search committees might ask that you submit a combined teaching and research statement, which, as you might guess, is the worst. So when you draft this document in the summer it’s just that: a draft. It’s preemptive writing. And it’s only just begun.

4. Statement of Research Interests

You know all the stuff you said about your research in the cover letter? Well say all of that again, only use different words and use more of them. This document could literally be any length come fall so just settle in, cowboy.

August 2013

Job ads have been posted! JOB ADS HAVE BEEN POSTED! JOB. ADS. HAVE. BEEN. POSTED.

Commence obsessing.

September 2013

At this point job ads are appearing in dribs and drabs, so you’re able to apply to them fairly quickly. If you were obsessive in preparing your materials over the summer, your primary task now is to tailor each set of materials to every job ad. This process involves: researching the individual department you’re applying to as well as the university, hunting down titles and descriptions of courses you might be asked to teach, and poring over every detail of the job ad to ensure that your materials appear to speak to their specific (or as it may be, general) needs. This takes more time than you think it will.

Also keep in mind that every ad will ask for a slightly different configuration of materials. Some search committees are darlings and only ask for a cover letter and CV for the first round of the search, while others ask for cover letter, CV, letters of recommendation, writing samples, teaching statements and all the lyrics to “We Didn’t Start the Fire.”

It’s also important to keep in mind that most folks on the academic job market are dissertating and/or teaching or, if you’re like me, already have a full-time job (and kids). But still, things haven’t gotten too stressful yet — the train’s barely left the station.

October-November 2013

Loads of jobs have been posted over the last few months and you are applying to ALL OF THEM. Well-meaning friends will send you emails with hopeful subject lines like “This job seems perfect for you!” and a link to a job you will not get. You apply to it anyway.

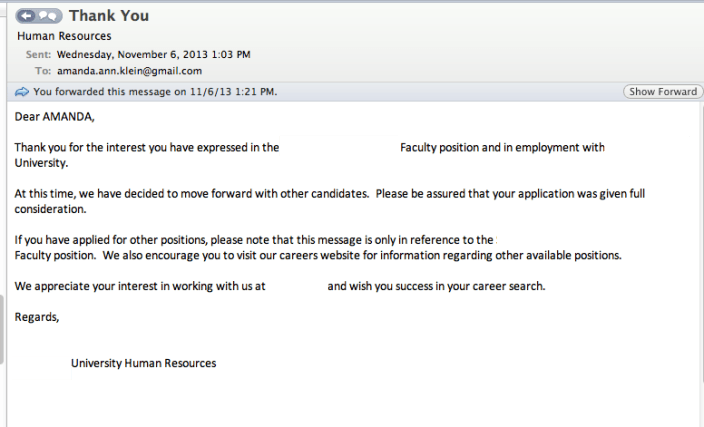

Also? Remember all those jobs you applied to back in September? Well, right now you might also start receiving automated rejection emails that look something like this:

Neat, huh?

If you are lucky, though, the search committee will send you an email asking you to “submit more materials” — Ah, it feels good even to type those words — and at that point you do a happy, submit-more-materials jig in front of your computer. Yay! More materials! They like me!

Every search committee will ask for something different at this point. Almost every school requests a writing sample and letters of recommendation at this stage. Some schools will ask the candidate to submit sample syllabi while still others ask for the candidate to design an entirely new syllabus. It’s kind of a free-for-all.

Oh, you might *also* be doing phone or Skype interviews with departments that don’t attend the annual MLA convention in early January, where many humanities-based schools conduct face-to-face first round interviews (more on those later). It’s far more humane to allow candidates to interview from home, so I’m always pleased when this is presented as an option. Of course, interviewing from home generates its own share of problems when, for example, your cat and your toddler simultaneously demand entrance to your office in the middle of a Skype interview for which you have put on a pressed button-down, suit jacket and a pair of pajama pants.

December 2013

Ah December, December. As the days get shorter, the Job Wiki gets longer. Most job candidates now have a pretty good idea about how the market is “going.” Spoiler alert: it is going terribly.

Even if you haven’t received a lot of rejections yet, it doesn’t mean you haven’t been rejected dozens of times. It just means that the university is going to wait until an offer is made and accepted by The One in the spring before sending you the automated rejection notice I posted above. Usually though, we don’t need to wait that long. If University X has already contacted the standard 10-15 candidates for first round interviews (which you know because you check the goddamn Wiki every 5 minutes) and they haven’t contacted you (which you know because you checked your spam folder twice and had your husband call your phone to be certain that it was working properly), then baby, you’re out.

Yes, December is a dark month for the job market candidate. As the winter holidays arrive, your dear academic friend has invested over six months in a job search which has, at best, offered ambiguity and at worst, pummeled her with outright rejection. Your friend, if she’s lucky, has some MLA interviews scheduled by now or maybe even … a final round interview! … lined up for just after the holidays. So try to pull her away from her interview flashcards. Treat her with care. Make her get drunk with you the day after Christmas in some crappy bar you two liked to frequent in your younger, more carefree days because listen: shit is about to get real for your friend.

to be continued…

[Part II of “Understanding Your Academic Friend: Job Market Edition” or “When Shit Gets Real” is now up. Click here to read. ]

So academic friends, have anything to add to this timeline? What else should the friends and family of job-seeking academics (henceforth FFJSA) know before the job season begins in earnest next month? Share below…

Tell Us A Story is Back!

I don’t usually cross post between blogs, but since Tell Us A Story returns today from summer vacation, I wanted to give them a shout out. I also wanted to encourage all of you readers to submit your true stories HERE.

To kick off the 2014-2015 season, Adam Rose brings us chemotherapy two ways and tells us exactly what it’s like to pump poison through your body:

“It’s been two days since my third round of chemotherapy. I needed two Ativan on my way to treatment in hopes that they would keep me calm enough for Roxanne and Mark to insert the tube into a vein. Turns out it was a two person job even with the dopey drug running through my system. The Ativan made my body slow down and my mind fuzz over like frost on a windshield. I squeezed Mark’s hand to pump up the reluctant vessels while focusing on the painting of a rodeo clown leaping over a bull. Roxanne struggled to find a vein that was relaxed enough for the needle.”

Click HERE to read the rest.

Notes on a Riot

image source: PBS news hour

Like many professors, I live on the same campus where I work. As a result, I’ve watched drunk East Carolina University students urinate and puke on my lawn and toss empty red Solo cups into the shrubbery around my home. But one evening I had a more troubling run-in with a college student. It began when I woke to the sound of my dog barking. It took me a minute to orient myself and understand that my dog was barking because someone was knocking on the front door. It was 2 am and my husband was out of town, but I opened the front door anyway. On the stoop was a college-aged woman dressed in a Halloween costume that consisted of a halter top, small tight shorts, and sky-high heels. The woman was sobbing and shivering in the late October air and her thick eye makeup was running down her face. She was incoherent and hysterical (I could smell the tequila on her breath), so it took me a while to figure out what she wanted.

She told me that she was visiting a friend for the night and that she had lost her friend…and her cell phone. She had no idea where she was or where to go. I think she came to my door because my porch light has motion detectors and she must have thought it was a sign. As she rambled on and on I could hear my baby crying upstairs. I told the woman to wait on my stoop, that I had to go get my baby and my phone, and that I would call the police to see if they could drive her somewhere. “Nooooooo,” she wailed, “don’t call the police!” I urged her to wait a minute so I could go get my baby and soothe him, but when I returned a few minutes later with my cell phone in hand, she was gone.

image source: http://www.nydailynews.com/

I felt many emotions that night: annoyance at being woken up, panic over how to best get help for the young woman, and later, guilt over my inability to help her. But one emotion that I did not feel that night was fear. I was never threatened by this young woman’s presence on my stoop and I never felt the need to “protect” my property. Why would I? She was a young woman, no more than 19 or 20, and though she was drunk and hysterical, she needed my help. I was reminded of this incident when I heard that Renisha McBride, a young woman of no more than 19 or 20, was shot dead last fall after knocking on Theodore Wafer’s door in the middle of the night while drunk and in need of help. Wafer was recently convicted of second-degree murder and manslaughter (which is a miracle), but that didn’t stop the Associated Press from describing the Wafer verdict thusly:

McBride, the victim, a young girl needlessly shot down by a paranoid homeowner, is described as a nameless drunk, even after a court ruling established her victimhood beyond a shadow of a doubt. Now, just a few days later in Ferguson, Missouri, citizens are actively protesting the death/murder of Michael Brown, another unarmed African American youth shot down for seemingly no reason. If you haven’t heard of Brown yet, here are the basic facts:

1. On Saturday Michael Brown, an unarmed African American teenager, was fatally shot by a police officer on a sidewalk in Ferguson, a suburb of St. Louis, Missouri.

2. There are 2 very different accounts of why and how Brown was shot. The police claim that Brown got into their police car and attempted to take an officer’s gun, leading to the chain of events that resulted in Brown fleeing the vehicle and being shot. By contrast, witnesses on the scene claim that Brown and his friend, Dorian Johnson, were walking in the middle of the street when a police officer pulled up, told the boys to “Get the f*** on the sidewalk,” and then hit them with his car door. This then led to a physical altercation that sent both boys running down the sidewalk with the police shooting after them.

3. As a result of Brown’s death/murder the citizens of Ferguson took to the streets, demanding answers, investigations, and the name of the officer who pulled the trigger. Most of these citizens have engaged in peaceful protests, while others have engaged in “looting” (setting fires, stealing from local businesses, and damaging property).

http://blogs.riverfronttimes.com/

Now America is trying to make sense of the riots/uprisings that have taken hold of Ferguson the last two days and whether the town’s reaction is or is not “justified.” Was Brown a thug who foolishly tried to grab an officer’s gun? Or, was he yet another case of an African American shot because his skin color made him into a threat?

I suppose both theories are plausible, but given how many unarmed, brown-skinned Americans have been killed in *just* the last two years — Trayvon Martin (2012), Ramarley Graham (2012), Renisha McBride (2013), Jonathan Ferrell (2013), John Crawford (2014), Eric Garner (2014), Ezell Ford (two days ago) — my God, I’m not even scratching the surface, there are too many to list — I’m willing to bet that Michael Brown didn’t do anything to *deserve* his death. He was a teenage boy out for a walk with his friend on a Saturday night, and his skin color made him into a police target. He was a threat merely by existing.

Given the number of bodies that are piling up — young, innocent, unarmed bodies — it shouldn’t be surprising that people in Ferguson have taken to the streets demanding justice. And yes, in addition to the peaceful protests and fliers with clearly delineated demands, there has been destruction of property and looting. But there is always destruction in a war zone. War makes people act in uncharacteristic ways. And make no mistake: Ferguson is now a war zone. The media has been blocked from entering the city, the FAA has declared the airspace over Ferguson a “no fly zone” for a week “to provide a safe environment for law enforcement activities,” and the police are firing rubber bullets and tear gas at civilians.

But no matter. The images of masses of brown faces in the streets of Ferguson can and will be brushed aside as “looters” and “f*cking animals.” Michael Brown’s death is already another statistic, another body on the pile of Americans who had the audacity to believe that they would be safe walking down the street or knocking on a door for help.

What is especially soul-crushing is knowing that these events happen over and over and over again in America — the Red Summer of 1919, Watts in 1965, Los Angeles in 1992 — and again and again we look away. We laud the protests of the Arab Spring, awed by the fortitude and bravery of people who risk bodily harm and even death in their demands for a just government, but we have trouble seeing our own protests that way. Justice is a right, not a privilege. Justice is something we are all supposed to be entitled to in this country.

***

When the uprisings in Los Angeles were televised in 1992, I was a freshman in high school. All I knew about Los Angeles was what I had learned from movies like Pretty Woman and Boyz N the Hood: there were rich white people, poor black people with guns, and Julia Roberts pretending to be a prostitute. On my television these “rioters and looters” looked positively crazy, out of control. And when I saw army tanks moving through the streets of Compton, I felt a sense of relief.

That’s because a lifetime of American media consumption — mostly in the form of film, television, and nightly newscasts — had conditioned my eyes and my brain to read images of angry African Americans not as allies in the struggle for a just country, but as threats to my country’s safety. I could pull any number of examples of how and why my brain and eyes were conditioned in this way. I could cite, for example, how every hero and romantic lead in everything I watched was almost always played by a white actor. I could cite how every criminal, rapist, and threat to my white womanhood was almost always played by a black actor. And those army tanks driving through the outskirts of Los Angeles didn’t look to me then like an infringement on freedom (as they do now). They looked like safety, because I came of age during the Gulf War, when images of tanks moving through war-torn streets, in regions of the world where people who don’t look like me live, had come to stand for “justice” and “peacemaking.” Images get twisted and flipped and distorted.


The #IfTheyShotMe hashtag, started by Tyler Atkins, illuminates how easily images — particularly the cache of selfies uploaded to a Facebook page or Instagram account — can be molded to support whatever narrative you want to spin about someone. Each post under the hashtag features two images that could tell two very different stories about an unarmed man after he is shot: a troublemaker or a scholar? a womanizer or a war vet? The hashtag shows how those who wish to believe that Michael Brown’s death was simply a tragic consequence of not following rules and provoking the police can easily find images of him flashing “gang signs” or looking tough in a photo, and thus “deserving” his fate. Those who believe he was wrongfully shot down because he, like most African American male teens, looks “suspicious,” can proffer images of Brown in his graduation robes.

Of course, as so many smart folks have already pointed out, it doesn’t really matter that Brown was supposed to go off to college this week, just as it doesn’t matter what a woman was wearing when she was raped. It doesn’t matter whether an unarmed man is a thug or a scholar when he is shot down in the street like a dog. But I like this hashtag because at the very least it is forcing us all to think about the way we’re all (mis)reading the images around us, to our peril.

The same day that the people of Ferguson took to the streets to stand up for Michael Brown and for every other unarmed person killed for being black, comedian and actor Robin Williams died. I was sad to hear this news and even sadder to hear that Williams took his own life, so I went to social media to engage in some good, old-fashioned public mourning: the Twitter wake. In addition to the usual sharing of memorable quotes and clips from the actor’s past, people in my feed were also sharing suicide prevention hotline numbers and urging friends to “stay here,” reminding them that they are loved and needed by their friends and families. People asked for greater understanding of mental illness and depression. And some people simply asked that we all try to be kind to each other, that we remember that we’re all human, that we all hurt, and that we are all, ultimately, the same. Folks, now it’s time to send some of that kind energy to the people of Ferguson and to the family and friends of Michael Brown. They’re hurting, and they need it.